A new generation of the NLP Lab is now available: the Generative AI Lab. Check the details here: https://www.johnsnowlabs.com/nlp-lab/

We are very excited to announce Annotation Lab 1.9.0, which expands the Models Hub page: users can now download classification models and multilingual embeddings, and view detailed information about trained models. Users also no longer need to run OCR outside Annotation Lab before importing PDF and image files. This version supports document OCR directly from the Import page, and the documents are imported as tasks. Another major improvement is a visual mode for creating the project configuration. Along with these features, this version includes many performance improvements, UI enhancements, and bug fixes.

Here are some of the highlights:

Improved Setup Page

Although writing the Labeling Config in XML gives more control, we have introduced an easier way to define common project types. In the Labeling Config section of the Project Setup page, users can toggle between Visual and XML Code mode. With the new Visual mode, users can create basic NER labels and classification choices without writing any XML. The name attribute of the Labels and Choices tags and the background color of each label are auto-generated. To change these or add anything else to the config, switch to XML Code mode, which remains the more powerful and flexible option. We plan to keep enhancing the capabilities of Visual mode.
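As a rough illustration of what Visual mode automates, the sketch below builds a labeling config from a list of NER labels and classification choices, auto-generating the `name` attributes and a distinct background color for each label. It is not Annotation Lab's actual generator: the helper names (`build_config`, `label_color`), the tag layout (`View`, `Label`, `Choice`, `Text`), the `toName` and `value` attributes, and the names `label`, `choice`, and `text` are assumptions modeled on Label Studio-style configs, and the evenly spaced hue scheme is our own choice.

```python
import colorsys
import xml.etree.ElementTree as ET


def label_color(index, total):
    """Hypothetical color scheme: spread hues evenly so each label is distinguishable."""
    r, g, b = colorsys.hsv_to_rgb(index / max(total, 1), 0.6, 0.95)
    return "#{:02x}{:02x}{:02x}".format(int(r * 255), int(g * 255), int(b * 255))


def build_config(ner_labels, choices):
    """Hypothetical helper: turn Visual-mode selections into an XML labeling config."""
    view = ET.Element("View")
    if ner_labels:
        # Assumed tag/attribute names; the name attribute is auto-generated.
        labels = ET.SubElement(view, "Labels", name="label", toName="text")
        for i, value in enumerate(ner_labels):
            ET.SubElement(labels, "Label", value=value,
                          background=label_color(i, len(ner_labels)))
    if choices:
        choice_group = ET.SubElement(view, "Choices", name="choice", toName="text")
        for value in choices:
            ET.SubElement(choice_group, "Choice", value=value)
    # The text being annotated; "$text" is an assumed task field reference.
    ET.SubElement(view, "Text", name="text", value="$text")
    return ET.tostring(view, encoding="unicode")
```

For example, `build_config(["PER", "LOC", "ORG"], ["Positive", "Negative"])` returns one XML string that could then be edited by hand, which mirrors switching from Visual to XML Code mode.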

Another major improvement on the Setup Page is the time it takes to validate the Labeling Config against all existing annotations. Previously, when a project had a large number of tasks with completions, the Setup Page took a long time to reload or save. In v1.9.0, we tested with more than 4,000 tasks and found validation to be almost 25 times faster than in previous versions.

Spark OCR integration

With the addition of Spark OCR pipelines, text in PDF and image files can be imported directly into Annotation Lab. On a project's Import page, the Project Owner or Manager can enable OCR for supported documents. Currently, OCR is supported for pdf, jpeg, jpg, and png files, and for zip files containing multiple files that all share exactly one extension.
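The zip rule above can be checked before uploading with a small script. The sketch below is a hypothetical helper (`ocr_zip_extension` is not part of Annotation Lab); it assumes extensions are compared case-insensitively and that `.jpg` and `.jpeg` count as two different extensions, so mixing them in one archive is rejected.

```python
import zipfile
from pathlib import PurePosixPath

# Extensions listed as OCR-supported in these release notes.
OCR_EXTENSIONS = {".pdf", ".jpeg", ".jpg", ".png"}


def ocr_zip_extension(zip_file):
    """Hypothetical pre-import check: return the single shared extension or raise ValueError.

    zip_file may be a path or a binary file-like object.
    """
    with zipfile.ZipFile(zip_file) as archive:
        # Skip directory entries; only actual files matter.
        names = [n for n in archive.namelist() if not n.endswith("/")]
    if not names:
        raise ValueError("archive contains no files")
    extensions = {PurePosixPath(n).suffix.lower() for n in names}
    if len(extensions) != 1:
        raise ValueError(f"expected exactly one extension, found {sorted(extensions)}")
    ext = extensions.pop()
    if ext not in OCR_EXTENSIONS:
        raise ValueError(f"unsupported extension for OCR: {ext!r}")
    return ext
```

Calling `ocr_zip_extension("scans.zip")` on an archive of PDFs returns `".pdf"`, while an archive mixing PDFs and PNGs raises `ValueError`.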

This feature is available only when a valid Spark OCR license is present in the Annotation Lab setup.

Upgrade to Spark NLP v3.0.2

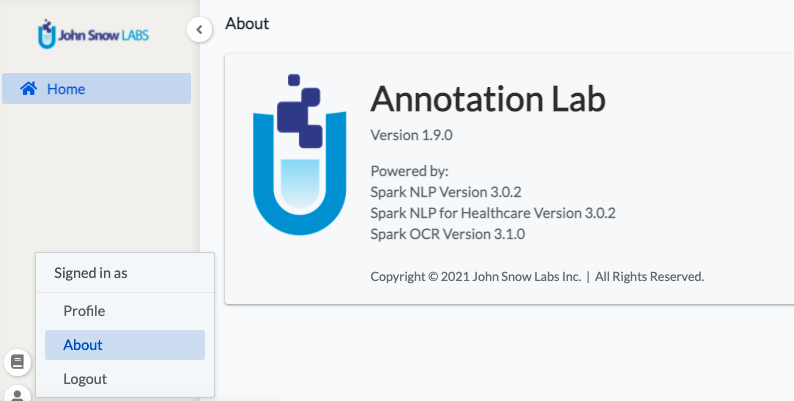

Because many features of Annotation Lab are powered by Spark NLP and Spark NLP for Healthcare, we always try to keep up with the latest versions of these libraries. This version of Annotation Lab upgrades both libraries to v3.0.2 and, alongside the new OCR feature, includes Spark OCR v3.1.0. This version information is available on the About page.

Models Hub

The Models Hub page, introduced in Annotation Lab v1.8.0, makes the models listed on https://nlp.johnsnowlabs.com/models easy to download and use for preannotating documents. The previous version supported only NER models.

With the new version, Annotation Lab also supports downloading classification models, along with the embeddings they use for preannotation.

Previously, only NER models and embeddings could be uploaded. With this release, uploading Spark NLP classification models that were trained outside Annotation Lab and are compatible with v3.0.2 is also supported.

The downloaded or uploaded models will be available in the Spark NLP Pipeline Config section on the Project Setup page and can be deployed as in the previous versions.

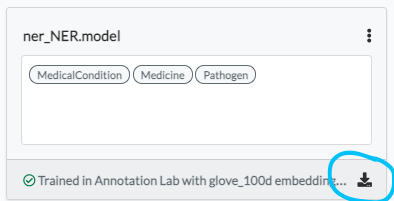

In the Available Models tab of the Models Hub page, training logs can be downloaded by clicking the download icon at the bottom of each model box, and other information about Trained Models (those trained inside Annotation Lab) is also shown.

With the upgrade of the Spark NLP and Spark NLP for Healthcare libraries, many new NER models and embeddings compatible with these versions are listed on the page. Multilingual embeddings have also been added and can be downloaded from the Models Hub page. Incompatible models have been removed from the list.

Models Training

Previously, training logs could only be downloaded once training had finished, which made it difficult for users to track training progress or find out what went wrong when training failed. Now users can simply click the live log button in the model training section of the Setup page to follow the training as it runs.

Schedule Database Backup

To help with disaster recovery planning, we have introduced a backup feature for the databases used by Annotation Lab. To schedule backups, specify the necessary parameters when installing or upgrading to this version. The database dump is sent to Amazon S3. For detailed instructions, please read the “instruction.md” file included with the installation artifacts of this release.

More great features

Page numbers on the Labeling page for paginated tasks were displayed poorly in both the single completion view and the completion comparison view. Also, when all completions were deleted, the Labeling page showed an unnecessary page. Both issues are now fixed.

Each release of Annotation Lab is backed by test cases. This time we have increased the number of test cases substantially, to almost 450 in total across the two supported browsers (Chrome and Firefox).

Bugfixes

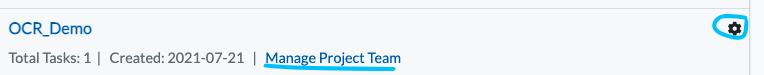

On the Project List page, the ‘Manage Project Team’ and ‘Go to setup page’ options were missing for users with the Manager role on a project.

Previously, training could be triggered even when there were no completions. This created unnecessary training resources that would eventually fail. Validation has now been added, and a proper error message is shown next to the “Train Now” button on the Setup page. If you are confident that there is enough training data but still see this message, we recommend checking the filters applied in the Training Configuration window.

Some keyboard shortcuts on the Labeling page that were not working properly have been fixed.

We have also fixed several issues on the Swagger API page.