
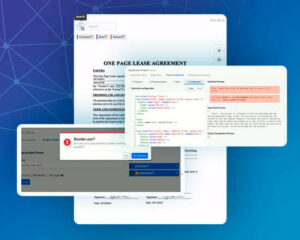

This release of Annotation Lab improves the performance of the Project Setup Page, adds a “View as” option to the Labeling Page, improves the layout of OCR-ed documents, and adds the option to stop training and model server deployment from the UI.

This version also delivers several other features that improve usability and stabilize the product. Details of the features and bug fixes included in this release follow.

Performance improvement in Setup page

In previous versions of Annotation Lab, changes to the Project Configuration would take a long time to validate if the project included a high volume of completions. Validation is now almost instant, even for projects with thousands of tasks. Multiple tests were conducted on projects with more than 13K tasks and thousands of extractions per task. In all of those tests, validating the Project Configuration took under 2 seconds. The results were replicated for all types of projects, including NER, Image, Audio, Classification, and HTML projects.

Introduce “View as” option in the labeling screen

When a user has multiple roles (Manager, Annotator, Reviewer), the Labeling Page should present and render different content and specific UX, depending on the role impersonated by the user. For a better user experience, this version adds a “View as” switch in the Labeling Page. Once the “View as” option is used to select a certain role, the selection is preserved even when the tab is closed or refreshed.

Note: This behavior is reflected in the Task List page too.

OCR Layout improvement

In previous versions of Annotation Lab, layout was not preserved in OCR-ed tasks. Recognized text was placed in top-to-bottom order, without considering the paragraph each token belonged to. From this version on, we use layout-preserving transformers from Spark OCR. As a result, tokens that belong to the same paragraph are now grouped together, producing more meaningful output.

Input Image:

OCR Result:
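To illustrate why grouping by paragraph matters, here is a minimal Python sketch comparing the two orderings on a two-column page. It is not the Spark OCR API: the `Token` class, its `paragraph` field, and the sample coordinates are hypothetical, and the sketch assumes a layout-aware step has already assigned a paragraph id to each token.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Token:
    text: str
    x: float        # left edge of the token
    y: float        # top edge of the token
    paragraph: int  # hypothetical paragraph id from a layout-aware OCR step


def top_to_bottom(tokens):
    """Naive ordering: sort by vertical, then horizontal position."""
    ordered = sorted(tokens, key=lambda t: (t.y, t.x))
    return " ".join(t.text for t in ordered)


def by_paragraph(tokens):
    """Layout-aware ordering: keep the tokens of each paragraph together."""
    groups = defaultdict(list)
    for t in tokens:
        groups[t.paragraph].append(t)
    # Order paragraphs by the position of their top-left-most token.
    paragraphs = sorted(groups.values(), key=lambda g: min((t.y, t.x) for t in g))
    return [
        " ".join(t.text for t in sorted(g, key=lambda t: (t.y, t.x)))
        for g in paragraphs
    ]


# Hypothetical two-column page: paragraph 0 on the left, paragraph 1 on the right.
tokens = [
    Token("Patient", 0, 0, 0), Token("history", 10, 0, 0),
    Token("Lab", 50, 0, 1), Token("results", 60, 0, 1),
    Token("is", 0, 1, 0), Token("normal", 10, 1, 0),
    Token("are", 50, 1, 1), Token("pending", 60, 1, 1),
]

print(top_to_bottom(tokens))
print(by_paragraph(tokens))
```

With the naive ordering, lines from the two columns are interleaved. With paragraph grouping, each column reads as a coherent block of text.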

Ability to stop training and model server deployment

Up until now, training and model server deployment could be stopped by system admins only. This version of Annotation Lab gives Project Owners/Managers the option to stop these processes by clicking a button in the UI. This option is necessary in many cases, such as when a manager or project owner starts training on a big project that takes a lot of resources and time, blocking access to preannotations for other projects.

Miscellaneous

Display meaningful message when training fails due to memory issues

If the training of a model fails due to a memory issue, the reason for the failure (for example, an out-of-memory error) is now shown in the UI.

Allow combining NER labels and Classification classes from Spark NLP pipeline config

Earlier versions had an issue when adding choices from a predefined classification model to an existing NER project. This issue has been fixed in this version.
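As a rough illustration of what a combined configuration might look like, the Python sketch below appends a classification block to an existing NER configuration. It assumes a Label Studio-style XML config with `Labels`, `Label`, `Choices`, `Choice`, and `Text` tags. The tag attributes, the `add_choices` helper, and the label and class names are all hypothetical and are not taken from this release.

```python
import xml.etree.ElementTree as ET

# Hypothetical NER project configuration (tag names are an assumption).
NER_CONFIG = """<View>
  <Labels name="label" toName="text">
    <Label value="Person"/>
    <Label value="Location"/>
  </Labels>
  <Text name="text" value="$text"/>
</View>"""


def add_choices(config_xml, name, classes):
    """Return a copy of the config with a Choices block appended."""
    root = ET.fromstring(config_xml)
    # Point the new block at the same text element the NER labels use.
    target = root.find("Text").get("name")
    choices = ET.SubElement(
        root, "Choices", {"name": name, "toName": target, "choice": "single"}
    )
    for cls in classes:
        ET.SubElement(choices, "Choice", {"value": cls})
    return ET.tostring(root, encoding="unicode")


combined = add_choices(NER_CONFIG, "sentiment", ["positive", "negative"])
print(combined)
```

The existing NER labels are left untouched, and the classification classes are added as a separate block that targets the same text element.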

Along with these features, numerous APIs have been added to the Swagger Docs.