In our previous article we discussed the problem of underrepresented languages in NLP, and how John Snow Labs collaborated with the community to facilitate the use of the open-source Spark NLP library in over 190 languages.

Part of these advancements of Spark NLP was the inclusion of languages with very different structures, such as Arabic, Persian or Urdu.

In this post, we’ll dive deeper into the use of Spark NLP for the Arabic language, which is the fourth most used language on the internet, and thus deserves special attention.

Characteristics of the Arabic language

Arabic is a complex language with a very unique structure. Using NLP to analyze text in Arabic is, therefore, a very different endeavor than understanding the text in English or Latin languages. Here are some characteristics that make Arabic particularly distinct from other languages:

- Words are written from right to left.

- Arabic has MANY regional dialects, which are often incomprehensible to each other.

- It’s a very rich language with many words describing a similar thing. For example, there are 14 stages of love alone, depending on how strong the feelings are, and there are hundreds of words in the Arabic language for one as common as “camel”.

- Capitalization and abbreviations don’t exist in Arabic.

- Complex and unusual methods of constructing words from a basic root and direct translation is very difficult without considering the context of the sentences and words around them.

- Short vowels are not prominent in the Arabic language, and often not written. For example, maktab (office) is written as mktb, omitting the vowels, much like stenographic shorthand.

Challenges of using NLP to understand Arabic

The characteristics mentioned above make it particularly hard to apply existing NLP models to Arabic and provide a burden for innovation in NLP to understand Arabic languages. On top of that, big, high-quality datasets needed to train the models are limited, and those datasets that are publicly accessible are mostly only found in Arabic search engines and are therefore hard to find for English-speaking researchers and data scientists.

Despite the challenges of developing NLP models to analyze Arabic, John Snow Labs has set a special focus on this research area to contribute to a much more efficient understanding of text written in Arabic, such as medical reports or important historic documents. Having NLP models for Arabic in place enables researchers and scientists around the globe to extract relevant information directly from the original documents instead of translating them first to English or another language and then running it through the model. This positively affects the accuracy when extracting entities in text.

Developing Spark NLP models for Arabic

Of all the libraries considering underrepresented languages, Spark NLP is probably the largest, and it was obvious for John Snow Labs that it should support the Arabic language as well, despite its complexity. The team began training the model for certain essential NLP tasks including named entity recognition and translation. With the support of internal collaborators who spoke Arabic, John Snow Labs also worked closely with Arabic speaking members of the Spark NLP community who contributed to the development of the models. The existence of vast amounts of textual material written in Arabic was used to efficiently train the models and measure their accuracies.

In comparison to the analysis of languages written from left to right, Arabic required an approach beyond simple rule-based methods. Common tasks such as text simplification to identify the root of a specific word or comma-based rules were no longer reliable. Multiple machine learning and deep learning techniques were used instead to develop the models for these new supported languages, including Hebrew, Persian and Urdu.

Tasks supported by Spark NLP for Arabic

Today, Spark NLP has 45 pre-trained models that can annotate data provided in Arabic. Supported tasks include:

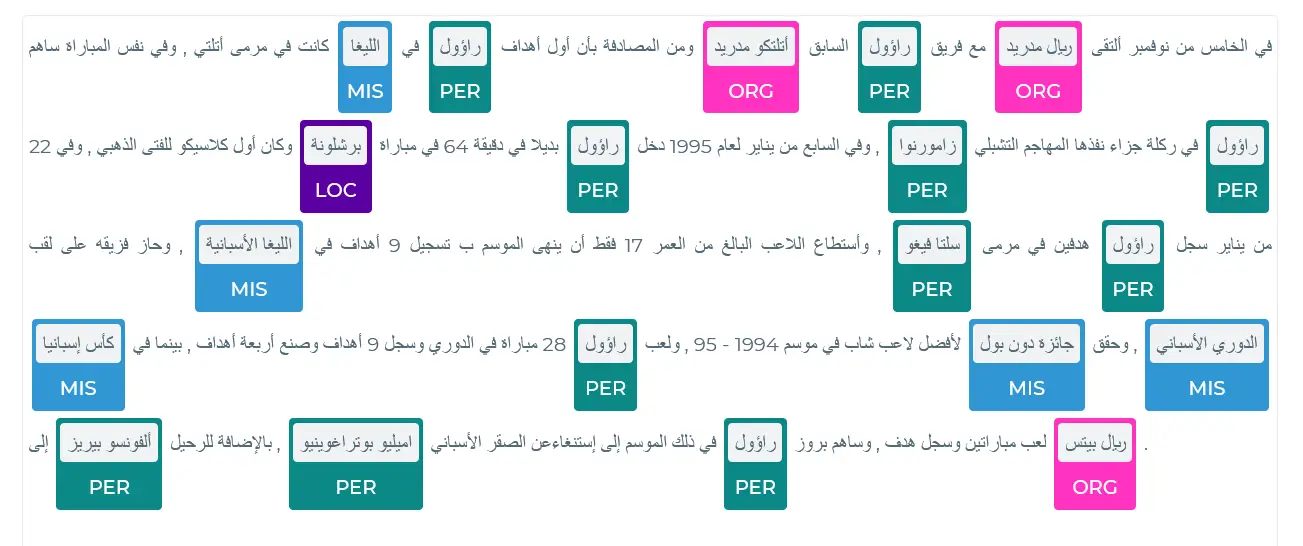

- Named entity recognition -> extract key elements from text and classify them into predefined categories.

- Translation -> translating Arabic to English, Spanish, Hebrew, Greek, French, and many more languages.

- Word and sentence embeddings -> detecting text vectors for sentiment analytics, text classifications, Named Entity Recognition (NER)

- Stop words removal -> filtering out irrelevant words before processing the analysis of the text, such as prepositions, pronouns, conjunctions, etc.

- Part-of-Speech (POS) -> assigning parts of speech to each word, such as noun, verb, adjective, etc.

- Lemmatization -> method that switches any kind of a word to its base root mode.

You can check out the live demo and notebook here to see how entities in Arabic text are recognized using Spark NLP.

Some simple and popular tasks, such as text classification, sentiment analysis, or summarization are still missing. The problem is not that these are too hard to train or that datasets are missing but with 190 languages supported by Spark NLP, it’s a question of prioritization. Before extending a model with further tasks and putting resources into it, it is evaluated what tasks are actually needed in a specific language to maximize the benefits of the library for the community. Therefore, it’s only a matter of time until the models will support the full range of NLP tasks and entirely keep up with the models trained in English.

Join the Spark NLP community on Slack to take part in the conversation and share your opinion about what tasks and languages should be supported next.