Version 1.19.0 of the library adds a sentence embedding model optimized for RAG applications in the finance domain, along with models for aspect-based sentiment analysis of financial entities in context.

Sentence Embedding Model

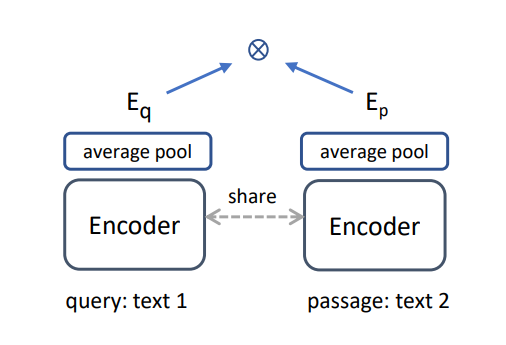

The new sentence embedding model expands the library's capabilities for Retrieval Augmented Generation (RAG) applications and for training text classification models. The E5 model achieves some of the best results on standard NLP benchmarks, and this release provides a finance-specific fine-tuned version.

Source: Wang, Liang, et al. “Text embeddings by weakly-supervised contrastive pre-training.” arXiv preprint arXiv:2212.03533 (2022).

To use the model in a Spark NLP pipeline, download the pretrained model:

document_assembler = (
    nlp.DocumentAssembler().setInputCol("text").setOutputCol("document")
)

E5_embedding = (
    nlp.E5Embeddings.pretrained(
        "finembedding_e5_base", "en", "finance/models"
    )
    .setInputCols(["document"])
    .setOutputCol("E5")
)

pipeline = nlp.Pipeline(stages=[document_assembler, E5_embedding])

Then, we can transform texts into vector representations with:

data = spark.createDataFrame(
    [["What is the best way to invest in the stock market?"]]
).toDF("text")

result = pipeline.fit(data).transform(data)

We can then obtain the vector arrays with:

result.select("E5.embeddings").show(truncate=100)

Obtaining:

+----------------------------------------------------------------------------------------------------+
|                                                                                          embeddings|
+----------------------------------------------------------------------------------------------------+
|[0.45521045, -0.16874692, -0.06179046, -0.37956607, 1.152633, 0.6849592, -0.9676384, 0.4624033, -0.7047892, ...|
+----------------------------------------------------------------------------------------------------+
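In a RAG application, the embedding of a query is typically compared with the embeddings of stored passages, and the closest passages are handed to the generator. The following is a minimal sketch of that ranking step in plain Python, assuming cosine similarity as the measure. The helper names (`cosine_similarity`, `rank_passages`) and the toy three-dimensional vectors are hypothetical; they stand in for vectors read from the `E5.embeddings` column.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction; treat it as unrelated
    return dot / (norm_a * norm_b)


def rank_passages(query_vec, passage_vecs, top_k=2):
    """Return the top_k (passage_id, score) pairs, best match first."""
    scored = [
        (pid, cosine_similarity(query_vec, vec))
        for pid, vec in passage_vecs.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy vectors for illustration only (hypothetical values, not model output).
query = [1.0, 0.0, 1.0]
passages = {
    "doc_a": [1.0, 0.0, 1.0],
    "doc_b": [0.0, 1.0, 0.0],
    "doc_c": [1.0, 1.0, 0.0],
}

top = rank_passages(query, passages, top_k=2)
for passage_id, score in top:
    print(passage_id, round(score, 3))
```

A linear scan like this is only reasonable for small collections; larger ones would normally use a vector index instead.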

Aspect-based Sentiment Analysis

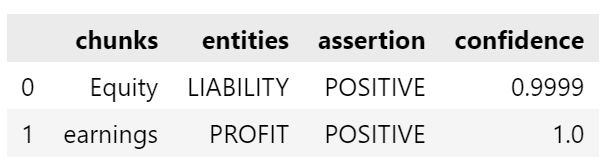

This release includes two related models: one for Named-Entity Recognition (NER) that identifies financial entities in the text, and one for assertion status that classifies the sentiment of each entity (POSITIVE, NEGATIVE, or NEUTRAL).

The models were trained on earnings call transcripts and aim to identify relevant information (entities) and their financial aspects (financially positive, financially negative, or neutral).

To use the models, we create a Spark NLP pipeline with all the required stages:

documentAssembler = (
    nlp.DocumentAssembler().setInputCol("text").setOutputCol("document")
)

# Split the document into sentences
sentenceDetector = (
    nlp.SentenceDetector()
    .setInputCols(["document"])
    .setOutputCol("sentence")
)

# Tokenizer splits sentences into tokens in a format suitable for NLP
tokenizer = (
    nlp.Tokenizer().setInputCols(["sentence"]).setOutputCol("token")
)

# Word embedding model fine-tuned on SEC filings
bert_embeddings = (
    nlp.BertEmbeddings.pretrained("bert_embeddings_sec_bert_base", "en")
    .setInputCols(["document", "token"])
    .setOutputCol("embeddings")
    .setMaxSentenceLength(512)
)

# The new NER model
finance_ner = (
    finance.NerModel.pretrained(
        "finner_aspect_based_sentiment_sm", "en", "finance/models"
    )
    .setInputCols(["sentence", "token", "embeddings"])
    .setOutputCol("ner")
)

# Convert NER annotations to CHUNK
ner_converter = (
    finance.NerConverterInternal()
    .setInputCols(["sentence", "token", "ner"])
    .setOutputCol("ner_chunk")
)

# The new Assertion model
assertion_model = (
    finance.AssertionDLModel.pretrained(
        "finassertion_aspect_based_sentiment_sm", "en", "finance/models"
    )
    .setInputCols(["sentence", "ner_chunk", "embeddings"])
    .setOutputCol("assertion")
)

# Build the pipeline
nlpPipeline = nlp.Pipeline(
    stages=[
        documentAssembler,
        sentenceDetector,
        tokenizer,
        bert_embeddings,
        finance_ner,
        ner_converter,
        assertion_model,
    ]
)

# Fit on empty data to obtain the PipelineModel for predictions
empty_data = spark.createDataFrame([[""]]).toDF("text")
model = nlpPipeline.fit(empty_data)

We can use the model on a given text, for example:

Equity and earnings of affiliates in Latin America increased to $4.8 million in the quarter from $2.2 million in the prior year as the commodity markets in Latin America remained strong through the end of the quarter.

We can identify the entities and their sentiment:
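After the fitted pipeline has been applied to a text, the chunk texts, chunk metadata, and sentiment labels can be collected from the `ner_chunk` and `assertion` columns and combined into one record per entity. The sketch below shows that pairing step in plain Python. It assumes the assertion stage emits exactly one label per chunk, in the same order, and that each chunk's metadata carries an `entity` key. The function name `pair_chunks_with_sentiment` is hypothetical, and the input values are placeholders, not model predictions.

```python
def pair_chunks_with_sentiment(chunk_texts, chunk_metadata, sentiments):
    """Combine parallel lists into one record per detected entity.

    Assumes the three lists are aligned by position, i.e. the i-th
    sentiment belongs to the i-th chunk.
    """
    if not (len(chunk_texts) == len(chunk_metadata) == len(sentiments)):
        raise ValueError("expected one metadata entry and one sentiment per chunk")
    return [
        {"chunk": text, "entity": meta.get("entity"), "sentiment": sentiment}
        for text, meta, sentiment in zip(chunk_texts, chunk_metadata, sentiments)
    ]


# Placeholder inputs for illustration only; these are NOT model predictions.
chunk_texts = ["chunk one", "chunk two"]
chunk_metadata = [{"entity": "LABEL_A"}, {"entity": "LABEL_B"}]
sentiments = ["POSITIVE", "NEUTRAL"]

records = pair_chunks_with_sentiment(chunk_texts, chunk_metadata, sentiments)
for record in records:
    print(record)
```

The length check makes a misaligned input fail loudly instead of silently attaching a sentiment to the wrong entity.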

Fancy trying?

We’ve got 30-day free licenses for you, with technical support from our team of technical and financial SMEs. The trial includes complete access to more than 150 models, including Classification, NER, Relation Extraction, Similarity Search, Summarization, Sentiment Analysis, Question Answering, etc., and 50+ financial language models.

Just go to https://www.johnsnowlabs.com/install/ and follow the instructions!

Don’t forget to check our notebooks and demos.

How to run

Finance NLP is easy to run on both clusters and driver-only environments using the johnsnowlabs library:

!pip install johnsnowlabs

from johnsnowlabs import nlp
nlp.install(force_browser=True)

Then, we can import the Finance NLP module and start working with Spark.

from johnsnowlabs import finance

# Start Spark Session
spark = nlp.start()

For alternative installation methods in specific environments, please check the docs.