When MiBA built their oncology data curation pipeline, the technical challenge extended far beyond processing individual data types. Their system needed to ingest 1.4 million physician notes and approximately 1 million PDF reports and scans, including pathology and radiology documents; extract temporal, anatomical, oncological, pathological, and treatment-related entities; identify 29.2 million relationships across 25 distinct relationship types; and enable clinical trial matching when cancer staging and biomarker findings are inclusion criteria.* This demonstrates why oncology artificial intelligence requires end-to-end pipelines: integrated workflows that process multimodal data from ingestion through clinical decision support, rather than isolated tools for individual tasks.

Modern oncology generates enormous data diversity: diagnostic imaging from radiology and pathology, genomic and molecular profiling, laboratory tests, clinical notes documenting histories and treatment plans, radiation therapy documentation, and follow-up outcomes. This data richness enables precision medicine but creates operational bottlenecks. Extracting, coding, normalizing, and linking information from disparate sources into structured form is time-consuming, expensive, and cannot scale to the data volumes contemporary oncology produces. End-to-end artificial intelligence pipelines that automate this abstraction process become not just efficiency improvements but operational necessities.

Why manual abstraction cannot scale to modern oncology data volumes

COTA’s mission demonstrates the manual abstraction challenge. Their goal of bringing clarity to cancer using real-world data requires uncompromising quality of regulatory-grade data as the foundation. COTA’s abstraction ecosystem traditionally combined human abstraction, structured data processing, and data extraction from clinical notes. Real-world data curation is fundamentally a mix of techniques requiring significant human expertise.*

But manual abstraction faces fundamental scalability limits. Electronic health records are a treasure trove of oncology patient management and outcomes data. Yet structured data, including ICD-10 codes and medication dispensing records, lacks context, while unstructured data, including physician notes and radiology and pathology reports, is rich with insights but infeasible to review manually at scale.* As data volumes grow with the inclusion of imaging studies, genomic sequencing, and expanding clinical documentation, human abstraction becomes the bottleneck preventing precision oncology from reaching its full potential.

Cancer registry building demonstrates this challenge. Teams developing cancer registries need to know how large language models compare with earlier natural language processing methods for cancer disease response classification and metastases site identification. The goal is finding low-cost, sustainable solutions for lean, low-resource healthcare data registry teams.* Without automated extraction, registry building remains prohibitively labor-intensive.

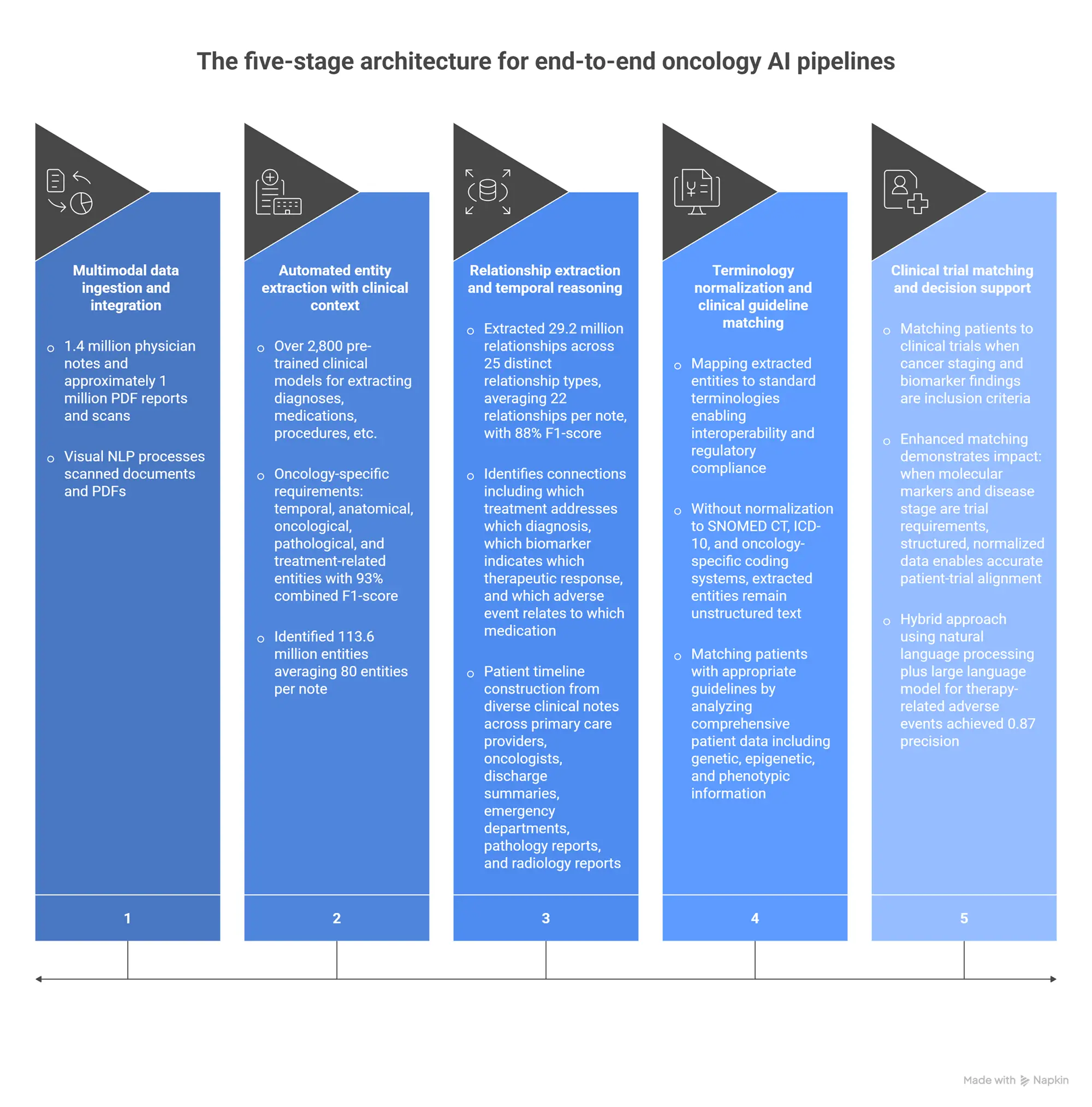

The five-stage architecture for end-to-end oncology AI pipelines

Across production implementations processing millions of oncology documents and images, a consistent architectural pattern emerges for end-to-end workflows:

Stage 1: Multimodal data ingestion and integration

MiBA’s pipeline demonstrates comprehensive data ingestion: 1.4 million physician notes and approximately 1 million PDF reports and scans including pathology reports, radiology reports, and genomic reports. Visual NLP processes scanned documents and PDFs that comprise significant portions of oncology data. This integrated ingestion addresses the fundamental fragmentation problem: oncology data lives in multiple systems using different formats, and comprehensive analysis requires consolidating all sources.

Stage 2: Automated entity extraction with clinical context

Healthcare NLP provides over 2,800 pre-trained clinical models for extracting diagnoses, medications, procedures, laboratory tests, and anatomical locations. MiBA’s implementation demonstrates oncology-specific requirements: extracting temporal, anatomical, oncological, pathological, and treatment-related entities with 93% combined F1-score for entity extraction. The system identified 113.6 million entities averaging 80 entities per note, a comprehensive capture impossible through manual abstraction.*

Roche’s oncology implementations show specialty-specific extraction patterns. Their work on relation extraction from clinical documents and deep learning for pathology reports demonstrates how specialized models handle oncology terminology, staging systems, and biomarker nomenclature that general-purpose systems cannot reliably process.*

Stage 3: Relationship extraction and temporal reasoning

MiBA’s pipeline extracted 29.2 million relationships across 25 distinct relationship types, averaging 22 relationships per note, with 88% F1-score for relationship extraction. This identifies connections including which treatment addresses which diagnosis, which biomarker indicates which therapeutic response, and which adverse event relates to which medication. These relationships are essential for clinical decision support but invisible in isolated entity lists.*
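To make the difference between an entity list and a relationship graph concrete, here is a minimal sketch. It assumes a simple in-memory representation; the class names, the labels, and the `treats` relation type are hypothetical and are not taken from MiBA's pipeline or from any library schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    entity_id: str
    label: str   # hypothetical label, e.g. "Treatment" or "Diagnosis"
    text: str    # surface text as it appears in the note


@dataclass(frozen=True)
class Relation:
    relation_type: str  # hypothetical type name, e.g. "treats"
    head_id: str        # id of the source entity
    tail_id: str        # id of the target entity


def related_pairs(entities, relations, relation_type):
    """Return (head text, tail text) pairs for one relation type."""
    by_id = {e.entity_id: e for e in entities}
    return [
        (by_id[r.head_id].text, by_id[r.tail_id].text)
        for r in relations
        if r.relation_type == relation_type
    ]


# Illustrative data only.
entities = [
    Entity("e1", "Treatment", "trastuzumab"),
    Entity("e2", "Diagnosis", "breast cancer"),
    Entity("e3", "Treatment", "warfarin"),
    Entity("e4", "Diagnosis", "deep vein thrombosis"),
]
relations = [
    Relation("treats", "e1", "e2"),
    Relation("treats", "e3", "e4"),
]

pairs = related_pairs(entities, relations, "treats")
```

The entity list alone says that two treatments and two diagnoses were mentioned. Only the relations say which treatment goes with which diagnosis.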

Roche’s oncology patient timeline construction demonstrates temporal reasoning requirements. Their system applies healthcare-specific large language models to construct detailed patient timelines from diverse clinical notes across primary care providers, oncologists, discharge summaries, emergency departments, pathology reports, and radiology reports. This chronological ordering enables understanding disease progression, treatment response, and outcome trajectories.*
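The ordering step of timeline construction can be sketched in a few lines. This is not Roche's implementation: it assumes the events and their dates have already been extracted, and the `ClinicalEvent` fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ClinicalEvent:
    event_date: date
    source: str        # e.g. "pathology report", "oncology note"
    description: str


def build_timeline(events):
    """Order events chronologically; same-day events keep their input order."""
    return sorted(events, key=lambda e: e.event_date)


# Illustrative events arriving out of order from different document types.
events = [
    ClinicalEvent(date(2023, 5, 2), "oncology note", "started chemotherapy"),
    ClinicalEvent(date(2023, 3, 14), "pathology report", "biopsy confirms carcinoma"),
    ClinicalEvent(date(2023, 8, 20), "radiology report", "follow-up imaging"),
]

timeline = build_timeline(events)
```

In practice the hard part is upstream of this sort: resolving relative expressions such as "three weeks after surgery" into dates, which is where the language models come in.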

Stage 4: Terminology normalization and clinical guideline matching

COTA’s regulatory-grade data curation uses Healthcare NLP for standardization and normalization, mapping extracted entities to standard terminologies to enable interoperability and regulatory compliance. Without normalization to SNOMED CT, ICD-10, and oncology-specific coding systems, extracted entities remain unstructured text that downstream systems cannot process.*
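As a rough illustration of what normalization does, the sketch below maps surface variants of a mention to a single concept identifier with a dictionary lookup. The identifier is a placeholder, not a real SNOMED CT or ICD-10 code, and production entity resolution typically uses trained models rather than exact lookup.

```python
def normalize(term, lookup):
    """Map a free-text mention to a concept identifier, or None if unmapped."""
    key = " ".join(term.lower().split())  # case-fold and collapse whitespace
    return lookup.get(key)


# Placeholder identifiers for illustration; NOT real terminology codes.
LOOKUP = {
    "her2 positive": "CONCEPT:HER2_POSITIVE",
    "her2-positive": "CONCEPT:HER2_POSITIVE",
    "her2+": "CONCEPT:HER2_POSITIVE",
}

mentions = ["HER2  Positive", "her2+", "HER2 equivocal"]
mapped = [normalize(m, LOOKUP) for m in mentions]
```

Returning `None` for an unmapped mention, rather than guessing, lets a downstream step route it to review.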

Roche’s National Comprehensive Cancer Network guideline matching demonstrates clinical decision support requirements. Their system matches patients with appropriate guidelines by analyzing comprehensive patient data including genetic, epigenetic, and phenotypic information, then accurately aligning individual patient profiles with relevant guidelines. This enhances precision in oncology care by ensuring each patient receives tailored treatment recommendations based on the latest evidence-based standards.*

Stage 5: Clinical trial matching and decision support

MiBA’s clinical trial matching validates end-to-end pipeline value. Their system enables matching patients to clinical trials when cancer staging and biomarker findings are inclusion criteria. This precision would be impossible if clinical data remained unstructured: when molecular markers and disease stage are trial requirements, only structured, normalized data enables accurate patient-trial alignment.*
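Once stage and biomarkers are structured, matching reduces to set logic. The sketch below is a simplified assumption of how that could look, not MiBA's matching logic; the class names, trial identifiers, and biomarker labels are hypothetical, and real eligibility rules also include exclusions, prior therapies, and time windows.

```python
from dataclasses import dataclass, field


@dataclass
class PatientProfile:
    patient_id: str
    stage: str                                   # normalized stage label
    biomarkers: set = field(default_factory=set)  # normalized biomarker findings


@dataclass
class TrialCriteria:
    trial_id: str
    allowed_stages: set
    required_biomarkers: set


def is_eligible(patient, trial):
    """Eligible if the stage is allowed and every required biomarker is present."""
    return (
        patient.stage in trial.allowed_stages
        and trial.required_biomarkers <= patient.biomarkers
    )


def match_trials(patient, trials):
    """Return the ids of all trials the patient qualifies for, in input order."""
    return [t.trial_id for t in trials if is_eligible(patient, t)]


# Illustrative data only.
patient = PatientProfile("P1", "III", {"HER2_POSITIVE"})
trials = [
    TrialCriteria("TRIAL-A", {"III", "IV"}, {"HER2_POSITIVE"}),
    TrialCriteria("TRIAL-B", {"I", "II"}, set()),
    TrialCriteria("TRIAL-C", {"III"}, {"EGFR_MUTATION"}),
]

matches = match_trials(patient, trials)
```

The comparison only works because both sides use the same normalized vocabulary. If the note said "HER2+" and the trial said "ERBB2 amplified", neither check would fire without the normalization stage.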

For therapy-related adverse events, MiBA’s hybrid of natural language processing and a large language model demonstrates the benefits of a multi-model architecture. BioBERT alone achieved precision under 0.5 for blood clot detection. The hybrid approach achieved 0.87 precision while parsing over 18,000 clinic notes in two hours. This shows why end-to-end pipelines combine specialized extraction models with large language model reasoning rather than relying on either approach alone.

Why specialized healthcare NLP outperforms general-purpose models for oncology workflows

The precision requirements for oncology artificial intelligence are unforgiving. Missed cancer diagnoses, incorrectly extracted biomarker results, or misidentified treatment histories create clinical risk. General-purpose large language models lack the medical knowledge, terminology precision, and reliability oncology demands.

Systematic assessment on 48 medical expert-annotated clinical documents showed Healthcare NLP achieving 96% F1-score for entity detection compared to GPT-4o’s 79%, with GPT-4o completely missing 14.6% of entities versus Healthcare NLP’s 0.9% miss rate.* For oncology clinical trial matching, where a missed biomarker result can exclude an eligible patient, a 14.6% miss rate means roughly one in seven relevant entities goes undetected, and each of those misses can cost a qualifying patient a match.
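The arithmetic behind these miss rates is worth spelling out. The sketch below uses the two reported rates; the compounding calculation additionally assumes that misses are independent across entities, which is a simplification and not something the benchmark reports.

```python
def one_in_n(miss_rate):
    """Express a miss rate as 'one in N'."""
    return 1.0 / miss_rate


def all_found_probability(miss_rate, k):
    """Probability that k entities are all detected, ASSUMING independent misses."""
    return (1.0 - miss_rate) ** k


GPT4O_MISS = 0.146           # reported entity miss rate
HEALTHCARE_NLP_MISS = 0.009  # reported entity miss rate

gpt4o_one_in = one_in_n(GPT4O_MISS)          # about 6.85, i.e. roughly one in seven
hnlp_one_in = one_in_n(HEALTHCARE_NLP_MISS)  # about 111

# If eligibility depends on two entities (say, stage and one biomarker),
# both must be found for the patient to match.
both_found_gpt4o = all_found_probability(GPT4O_MISS, 2)          # about 0.729
both_found_hnlp = all_found_probability(HEALTHCARE_NLP_MISS, 2)  # about 0.982
```

Under that independence assumption, a trial with two extracted criteria would lose about 27% of candidates at the higher miss rate and about 2% at the lower one.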

The CLEVER study’s blind physician evaluation found medical doctors preferred specialized healthcare natural language processing 45% to 92% more often than GPT-4o on factuality, clinical relevance, and conciseness across 500 novel clinical test cases.* For oncology information extraction, specialized models held a 10-13% advantage across evaluation dimensions.

This superior performance was achieved using an 8-billion-parameter model, demonstrating that domain-specific training enables smaller models to outperform much larger general-purpose systems.* MiBA’s 93% entity extraction and 88% relationship extraction F1-scores across 1.4 million notes validate the specialized architecture at production scale, a level of performance general-purpose models cannot reliably achieve.

Healthcare NLP reduces processing costs by over 80% compared to cloud-based large language model APIs through fixed-cost local deployment.* For organizations processing millions of oncology documents, per-request API pricing is economically infeasible. MiBA parsing over 18,000 clinic notes in two hours demonstrates the throughput production systems require.
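For capacity planning, the reported throughput converts into a rate as follows. The extrapolation to 1.4 million notes is an assumption for illustration only: it supposes the same rate holds across the whole corpus on a comparable deployment, which the source does not state.

```python
NOTES_PARSED = 18_000      # "over 18,000" in the source, so treat as a lower bound
HOURS_ELAPSED = 2
CORPUS_NOTES = 1_400_000   # size of the physician-note corpus

notes_per_hour = NOTES_PARSED / HOURS_ELAPSED    # 9000.0
notes_per_second = notes_per_hour / 3600         # 2.5

# Hypothetical: time to process the full corpus at the same sustained rate.
hours_for_corpus = CORPUS_NOTES / notes_per_hour
days_for_corpus = hours_for_corpus / 24
```

At a sustained 9,000 notes per hour, the full note corpus would take a little under a week of continuous processing on one such deployment, before any horizontal scaling.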

Production implementations demonstrating end-to-end oncology pipelines

MiBA comprehensive oncology data extraction: Processing 1.4 million physician notes and approximately 1 million PDF reports and scans, their pipeline achieved 93% F1-score for entity extraction and 88% for relationship extraction, identified 113.6 million entities and 29.2 million relationships, enabled clinical trial matching based on structured staging and biomarker data, and achieved 0.87 precision for adverse event detection using natural language processing plus large language model hybrid architecture. This demonstrates complete workflow from raw documents through clinical decision support.*

Roche oncology patient timelines and guideline matching: Their implementation constructs detailed patient timelines from diverse sources including primary care, oncology, pathology, and radiology documentation using healthcare-specific large language models. The system matches patients with National Comprehensive Cancer Network guidelines by analyzing genetic, epigenetic, and phenotypic data to ensure tailored treatment recommendations. This shows end-to-end workflow from timeline construction through evidence-based decision support.*

COTA regulatory-grade data curation: Their mission requires uncompromising quality for regulatory submissions and real-world evidence. The abstraction ecosystem combines automated natural language processing using Healthcare NLP for standardization and normalization with human expertise for validation. This example demonstrates how end-to-end automation augments rather than replaces human judgment for regulatory-grade applications.*

Cancer registry building with lean teams: The work on large language models facilitating cancer data registry building addresses resource-constrained teams seeking low-cost, sustainable solutions. Evaluating relative performance of models for cancer disease response classification and metastases site identification demonstrates how automated extraction makes registry building feasible for organizations lacking large manual abstraction teams.*

Therapy Specific Outcomes Coalition rheumatology extraction: While focused on rheumatology rather than oncology, their collaboration demonstrates specialty-specific extraction patterns applicable across therapeutic areas. Using John Snow Labs pretrained models to extract patient-reported pain scores, pain assessment results, and joint pain locations from clinical notes to determine therapy efficacy over time shows how automated extraction enables outcomes tracking impossible through manual abstraction.*

The operational transformation: from manual abstraction to automated pipelines

The shift from manual abstraction to end-to-end artificial intelligence pipelines fundamentally changes oncology workflows:

Labor allocation transformation: Data abstraction shifts from being the primary task of trained abstractionists to a validation and quality assurance role. MiBA’s processing of 1.4 million notes with automated extraction demonstrates a scale impossible through manual abstraction. Human experts focus on validating automated output, handling edge cases, and ensuring clinical accuracy rather than performing initial extraction.

Throughput and latency improvement: What traditionally required weeks or months of manual abstraction now processes in hours or days. MiBA parsing over 18,000 clinic notes in two hours demonstrates throughput enabling near-real-time clinical decision support. This accelerates diagnosis, treatment planning, clinical trial enrollment, and research.

Consistency and standardization: Automated pipelines impose consistent entity recognition, terminology mapping, and relationship extraction across all documents. This reduces variability from individual abstractor interpretation, institutional documentation differences, and temporal drift in coding practices.

Multimodal integration capability: End-to-end pipelines combine imaging, genomics, clinical notes, and laboratory data that manual workflows struggle to integrate. Roche’s timeline construction from diverse sources and MiBA’s comprehensive extraction from notes plus PDF reports demonstrate integration capabilities enabling holistic patient understanding.

Scalability to population-level analysis: Automated extraction enables population health analytics, real-world evidence generation, and registry building at scale. Cancer registry work demonstrates how automation makes comprehensive data capture feasible for resource-constrained organizations.

Implementation requirements and governance considerations

Several operational factors determine end-to-end pipeline success:

Data infrastructure and interoperability: Ohio State University’s research infrastructure processing 200+ million notes demonstrates requirements: unified data ingestion consolidating electronic health records, imaging systems, laboratory databases, and genomic platforms; portable configuration supporting both cloud and on-premise deployment; and consistent logging for monitoring and compliance.*

Human-in-the-loop validation for regulatory applications: COTA’s regulatory-grade approach combines automated extraction with human expertise specifically because submissions to regulatory agencies require validation. Organizations should identify which extraction decisions require human review based on clinical impact, regulatory requirements, or error consequences.

Privacy and de-identification for multi-institutional collaboration: Dandelion Health’s de-identification demonstrates governance requirements when consolidating data from multiple sources. Breaking down data types by risk level, applying pre-processing enhancements, and maintaining full provenance ensures privacy while preserving utility.*

Continuous model updating for evolving oncology knowledge: Cancer treatment guidelines, biomarker definitions, and staging systems evolve rapidly. Organizations must establish processes for updating extraction models, terminology mappings, and decision support logic as clinical knowledge advances. This means ongoing maintenance rather than a one-time implementation.

Validation across diverse patient populations: Models trained on one institution’s data may not generalize to different demographics, documentation patterns, or treatment approaches. Organizations should validate performance across diverse populations to ensure equitable accuracy and avoid systematic bias against underrepresented groups.

Looking forward: democratizing precision oncology through automated abstraction

The implementations across MiBA, Roche, COTA, and cancer registry building demonstrate that end-to-end oncology artificial intelligence pipelines have moved from research concepts to production systems. MiBA’s 93% entity extraction across 1.4 million notes, Roche’s patient timeline construction and guideline matching, and COTA’s regulatory-grade data curation establish that automated abstraction achieves the accuracy and scale contemporary oncology requires.

Healthcare organizations continuing to rely on manual abstraction face growing disadvantages: inability to process data volumes contemporary oncology generates, limited clinical trial enrollment from incomplete patient characterization, delayed treatment decisions from abstraction backlogs, and inability to participate in multi-institutional research requiring standardized data.

Those implementing end-to-end automated pipelines gain competitive advantages through comprehensive patient characterization enabling precision treatment selection, rapid clinical trial matching expanding patient options, real-world evidence generation supporting research and quality improvement, and scalable operations supporting growth without proportional staffing increases.

Organizations can explore end-to-end oncology capabilities through Healthcare NLP demonstrations showing entity extraction, relationship identification, and terminology normalization. The customer implementations including MiBA, Roche, and COTA demonstrate production architectures. Technical documentation provides implementation patterns for multimodal oncology data processing. The Patient Journey Intelligence solution addresses longitudinal timeline reconstruction essential for oncology care.

FAQs

How accurate is automated oncology data extraction compared to manual abstraction?

MiBA’s production validation demonstrates automated extraction achieving 93% F1-score for entity extraction and 88% for relationship extraction across 1.4 million physician notes and approximately 1 million PDF reports. Systematic benchmarking shows Healthcare NLP achieving 96% F1-score for clinical entity detection compared to GPT-4o’s 79%, with Healthcare NLP missing only 0.9% of entities versus GPT-4o missing 14.6%. The CLEVER study found medical doctors preferred specialized healthcare natural language processing 45-92% more often than GPT-4o on clinical tasks. However, accuracy depends on documentation quality, terminology consistency, and model training. COTA’s regulatory-grade approach combines automated extraction with human validation specifically because regulatory submissions require expert review. Organizations should validate automated extraction against expert abstractor review on sample datasets, measuring agreement rates and identifying systematic error patterns requiring model refinement or human-in-the-loop validation.
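Validating against expert review comes down to comparing two sets of annotations. The sketch below computes precision, recall, and F1 under exact matching; the tuple layout `(note id, label, text)` and the sample annotations are hypothetical, and published benchmarks often use more lenient partial-match scoring.

```python
def precision_recall_f1(predicted, gold):
    """Exact-match scoring of predicted items against expert-annotated items."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Illustrative annotations: (note id, label, text).
gold = {
    ("note1", "Diagnosis", "breast cancer"),
    ("note1", "Biomarker", "HER2 positive"),
    ("note1", "Stage", "stage III"),
    ("note2", "Treatment", "trastuzumab"),
}
predicted = {
    ("note1", "Diagnosis", "breast cancer"),
    ("note1", "Biomarker", "HER2 positive"),
    ("note2", "Treatment", "trastuzumab"),
    ("note2", "Diagnosis", "lung cancer"),
}

precision, recall, f1 = precision_recall_f1(predicted, gold)
missed = gold - predicted     # entities the expert found but the system did not
spurious = predicted - gold   # entities the system produced without expert support
```

Listing `missed` and `spurious` separately is what surfaces systematic error patterns, for example a model that consistently drops staging mentions.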

Can automated pipelines handle multimodal oncology data including imaging, genomics, and clinical notes?

Yes, through integrated architectures combining specialized models for each modality. MiBA’s implementation processes 1.4 million physician notes plus approximately 1 million PDF reports and scans including pathology and radiology documents using Healthcare NLP for text and Visual NLP for scanned documents and images. Roche’s patient timeline construction extracts from diverse sources including primary care notes, oncologist documentation, pathology reports, and radiology reports. The critical requirement is entity resolution and terminology normalization that maps extracted concepts across modalities to standard codes, ensuring “HER2 positive” extracted from pathology reports, genomic sequencing results, and clinical notes all map to the same standardized representation. Organizations implementing multimodal pipelines should establish controlled vocabularies, validate cross-modality entity resolution accuracy, and implement human review for ambiguous cases where different modalities provide conflicting information.
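Flagging cases where modalities disagree can be sketched as a grouping step over normalized findings. The field names and values below are hypothetical, and the sketch assumes normalization has already mapped every source to the same attribute and value vocabulary.

```python
from collections import defaultdict


def find_conflicts(findings):
    """Return {(patient, attribute): {source: value}} where sources disagree.

    Keeps one value per source; a later finding from the same source
    overwrites an earlier one.
    """
    grouped = defaultdict(dict)
    for f in findings:
        grouped[(f["patient_id"], f["attribute"])][f["source"]] = f["value"]
    return {
        key: by_source
        for key, by_source in grouped.items()
        if len(set(by_source.values())) > 1
    }


# Illustrative normalized findings from three document types.
findings = [
    {"patient_id": "P1", "attribute": "HER2_STATUS", "source": "pathology report", "value": "positive"},
    {"patient_id": "P1", "attribute": "HER2_STATUS", "source": "clinical note", "value": "positive"},
    {"patient_id": "P2", "attribute": "HER2_STATUS", "source": "pathology report", "value": "positive"},
    {"patient_id": "P2", "attribute": "HER2_STATUS", "source": "genomic report", "value": "negative"},
]

conflicts = find_conflicts(findings)
```

Here patient P1 is consistent across sources, while P2 would be queued for human review because the pathology and genomic reports disagree.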

How do end-to-end pipelines enable clinical trial matching?

By extracting and structuring eligibility criteria data that manual processes cannot capture comprehensively at scale. MiBA’s implementation demonstrates this: when cancer staging and biomarker findings are trial inclusion criteria, automated extraction and normalization enable precise patient-trial matching. Their enhanced matching shows impact when molecular markers and disease stage are requirements—structured data enables accurate alignment impossible with unstructured documentation. Roche’s National Comprehensive Cancer Network guideline matching demonstrates similar capability: analyzing genetic, epigenetic, and phenotypic data to match patients with appropriate treatment protocols. The workflow includes: (1) extracting diagnosis, staging, biomarker, and treatment history from clinical notes and reports, (2) normalizing to standard terminologies matching trial eligibility specifications, (3) identifying temporal relationships ensuring current versus historical status, (4) matching structured patient profiles against trial criteria databases, and (5) ranking trial options by eligibility fit and clinical appropriateness. Organizations implementing trial matching should validate that extraction captures all eligibility-relevant entities, terminology mapping aligns with trial specification vocabularies, and matching logic handles complex multi-criteria eligibility rules.

What happens to data abstractionist roles when automation handles extraction?

Roles transform from primary extraction to validation, quality assurance, and complex case handling. COTA’s regulatory-grade approach demonstrates this evolution: their abstraction ecosystem combines automated natural language processing with human expertise, maintaining uncompromising quality through expert validation rather than manual extraction. Organizations implementing automated pipelines report that abstractionists focus on: (1) validating automated extraction output against clinical documentation, (2) handling edge cases where automated models have low confidence or conflicting information, (3) maintaining terminology standards and extraction rules as clinical knowledge evolves, (4) training and fine-tuning models on institutional documentation patterns, and (5) ensuring regulatory compliance for submissions requiring expert review. This represents labor reallocation rather than elimination. Abstractionists shift from routine data entry to quality oversight and expert judgment. Organizations should plan for abstractor retraining in model validation, error analysis, and quality assurance rather than assuming automated systems eliminate abstraction roles entirely.

How long does it take to implement end-to-end oncology AI pipelines?

Implementation timelines depend on existing data infrastructure, documentation standardization, and integration complexity. Organizations using modern data platforms like Databricks can deploy Healthcare NLP pipelines in weeks for standard extraction use cases. MiBA’s processing of 1.4 million notes and approximately 1 million PDF reports demonstrates production-scale deployment, though achieving 93% entity extraction and 88% relationship extraction required model training and validation. Organizations should plan for: (1) data infrastructure setup consolidating electronic health records, pathology systems, radiology archives, and genomic platforms (weeks to months depending on existing interoperability), (2) extraction model deployment and institutional fine-tuning (weeks), (3) terminology normalization and entity resolution configuration (weeks), (4) clinical trial matching or decision support logic implementation (weeks to months), (5) human-in-the-loop validation workflow integration (weeks), and (6) accuracy validation against expert review (ongoing). The total timeline from planning to production typically ranges from 3-6 months for organizations with modern infrastructure to 6-12 months for those requiring significant legacy system integration. However, phased deployment delivers incremental value: most organizations can start with entity extraction from notes, then add PDF processing, then add clinical trial matching, rather than waiting for a complete pipeline before seeing any benefit.

How do organizations ensure automated extraction meets regulatory requirements for real-world evidence?

Through systematic validation, human oversight, and comprehensive audit trails. COTA’s mission demonstrates requirements: bringing clarity to cancer using real-world data demands uncompromising quality of regulatory-grade data as foundation. Their approach combines automated extraction with human expertise specifically for regulatory applications. Organizations should implement: (1) validation against expert abstractor review on statistically representative samples, measuring agreement rates and documenting error patterns, (2) human-in-the-loop review for high-impact extractions affecting regulatory submissions, (3) comprehensive audit trails documenting which documents were processed, which models were used, what entities were extracted, and when human review occurred, (4) provenance tracking linking every extracted data point to source documentation, (5) confidence scoring flagging low-confidence extractions for mandatory human review, and (6) ongoing performance monitoring detecting accuracy drift requiring model retraining. Dandelion Health’s de-identification approach shows validation patterns: maintaining full provenance, demonstrating methodology for regulatory review, and documenting trade-offs between recall and precision. Organizations submitting real-world evidence should engage with regulatory agencies early to understand specific data quality documentation requirements and validation expectations.
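Provenance tracking and confidence-based review routing can live in the same record. The sketch below is one possible shape under stated assumptions: the field names, the model version string, and the 0.90 threshold are hypothetical, and the confidence is assumed to be a score between 0 and 1 supplied by the extraction model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Extraction:
    document_id: str     # provenance: which source document
    char_start: int      # provenance: where in the document text
    char_end: int
    model_version: str   # audit trail: which model produced this
    label: str
    text: str
    confidence: float    # assumed to be in [0, 1]


def route(extractions, threshold=0.90):
    """Split extractions into auto-accepted and mandatory-human-review queues."""
    accepted = [e for e in extractions if e.confidence >= threshold]
    review = [e for e in extractions if e.confidence < threshold]
    return accepted, review


# Illustrative records only.
extractions = [
    Extraction("doc-001", 120, 133, "model-v1", "Diagnosis", "breast cancer", 0.98),
    Extraction("doc-001", 210, 219, "model-v1", "Stage", "stage III", 0.72),
    Extraction("doc-002", 45, 58, "model-v1", "Biomarker", "HER2 positive", 0.90),
]

accepted, review = route(extractions)
```

Because every record carries its document id, character span, and model version, a reviewer or auditor can trace any data point back to the exact passage it came from. The threshold itself should be set from validation data rather than chosen by convention.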

What is the ROI of implementing automated oncology data extraction?

ROI varies by use case but documented benefits include: MiBA’s comprehensive extraction enabling clinical trial matching that manual processes cannot achieve at scale, faster treatment decisions from automated abstraction eliminating weeks of manual data preparation, increased clinical trial enrollment from systematic patient-trial matching identifying eligible candidates, improved real-world evidence generation from structured longitudinal data, and reduced abstraction labor costs enabling reallocation to higher-value activities. For organizations with large oncology programs, even modest improvements in trial enrollment generate substantial returns through per-patient trial revenue and improved outcomes from expanded treatment options. Cancer registry building work demonstrates value for resource-constrained organizations: automated extraction makes registry maintenance feasible for lean teams that cannot afford large manual abstraction staffs. Organizations should model ROI based on: current abstraction labor costs, clinical trial enrollment potential and associated revenue, real-world evidence research value, treatment decision acceleration benefits, and operational scalability enabling patient volume growth without proportional staffing increases. Most organizations processing tens of thousands of oncology encounters annually achieve positive ROI within 12-18 months.

What solutions does John Snow Labs offer for oncology workflow automation?

John Snow Labs provides comprehensive infrastructure for end-to-end oncology pipelines. Healthcare NLP includes over 2,800 pre-trained clinical models for entity extraction, relation extraction capturing connections between diagnoses, treatments, biomarkers, and outcomes, assertion detection identifying clinical context, and entity resolution mapping to SNOMED CT, ICD-10, and oncology-specific terminologies. Visual NLP processes scanned pathology reports, radiology documents, and PDF records that MiBA’s implementation shows comprise significant portions of oncology data. Medical LLM provides specialized large language models for clinical reasoning, timeline construction, and guideline matching that Roche’s implementations demonstrate. Patient Journey Intelligence enables longitudinal timeline reconstruction essential for understanding disease progression and treatment response. Generative AI Lab provides human-in-the-loop validation workflows that COTA uses for regulatory-grade quality assurance. These integrate with Databricks, AWS, Azure, and on-premise environments supporting the scalable deployment MiBA demonstrates processing 1.4 million notes. Organizations can explore live demonstrations, review customer implementations including MiBA, Roche, and COTA, or access technical documentation for oncology pipeline architecture patterns.