Every day, healthcare organizations face an impossible balancing act. Clinical teams need AI tools to extract insights from unstructured medical records, validate de-identification results, and accelerate annotation workflows. But every interaction with patient data creates compliance risk.

Consider the reality of modern healthcare AI workflows:

Annotation teams review thousands of clinical notes, validating AI-extracted entities like medications, diagnoses, and procedures. Each review involves accessing Protected Health Information (PHI). Who viewed which patient records? When? For how long? Without answers, every HIPAA audit becomes a scramble through server logs.

De-identification specialists prepare datasets for AI training, removing or masking sensitive information. But how do you prove that de-identified data was handled correctly? That the right team members accessed the right records? That no unauthorized exports occurred during the process?

Compliance officers know that “we think we’re compliant” isn’t good enough. Regulators expect detailed audit trails: what was accessed, who modified it, what was exported, and where it went. Manual log reviews can take weeks and still miss critical events.

Security teams face a constant threat: well-meaning employees exporting sensitive data to uncontrolled locations. A single CSV file downloaded to a laptop can represent hundreds of patient records outside secure systems.

The problem isn’t just logging activity—most platforms capture logs. The challenge is turning those logs into answers when they matter most: during audits, investigations, or when suspicious patterns emerge.

Generative AI Lab: A platform built for healthcare AI governance

Generative AI Lab addresses these challenges through a comprehensive approach to AI governance that goes beyond basic logging. It’s designed for the reality of healthcare workflows where Human-in-the-Loop annotation, validation, de-identification, and AI training must happen securely, compliantly, and transparently.

As generative AI becomes crucial to healthcare workflows, organizations need more than strong models – they need platforms they can trust, manage, and explain. In regulated environments, visibility is not just a nice feature – it’s essential for compliance, security, and accountability.

That’s why Generative AI Lab 7.8 introduces the Audit Logs Dashboard, a HIPAA-compliant, system-wide auditing solution that provides real-time, detailed visibility into all platform activities.

The Audit Logs Dashboard: From raw logs to actionable insights

Many AI platforms capture activity logs. Few make those logs actionable when compliance teams need answers immediately. The Audit Logs Dashboard provided by Generative AI Lab transforms low-level events into clear, visual insights that address the specific questions healthcare organizations face:

Real-time activity visibility

Every interaction with the platform is logged with granular detail. When annotation teams validate AI predictions on clinical notes, the system records who accessed which projects, when they made changes, and what those changes were. When de-identification workflows run, every step is tracked—from the initial data import to the final validation by clinical experts.

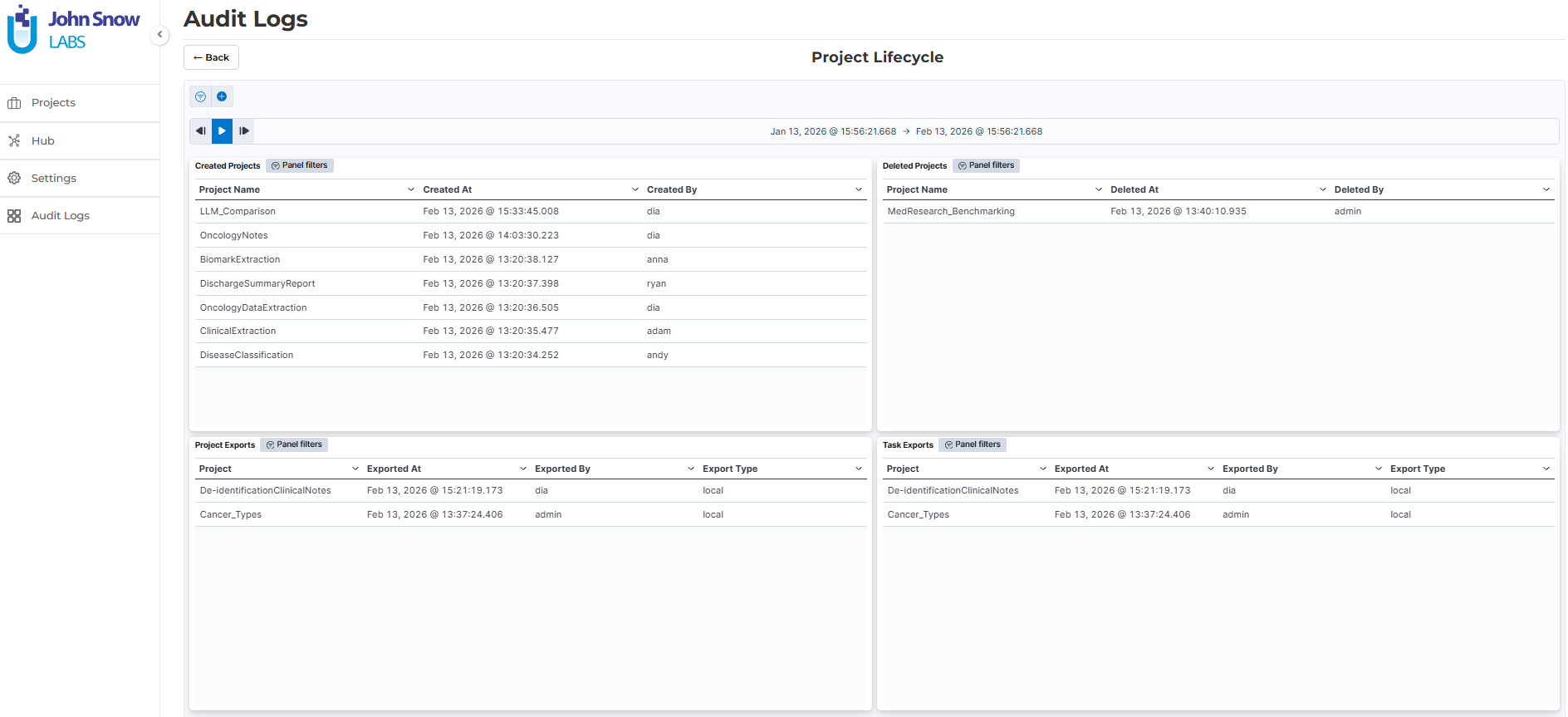

The dashboard tracks the complete project lifecycle: creation, updates, configuration changes, and deletion. For annotation projects processing PHI, this means complete traceability of who did what, when, and in what context.

Data movement tracking

For healthcare organizations, knowing when and how data leaves the platform is critical. The dashboard monitors all exports, both to local workstations and to cloud storage, and logs what was exported, by whom, and to which destination.

When preparing datasets for AI training, teams can demonstrate that only authorized personnel exported de-identified data to approved cloud locations. When running HITL validation workflows, supervisors can review which annotators accessed which patient records and verify that no unauthorized exports occurred.

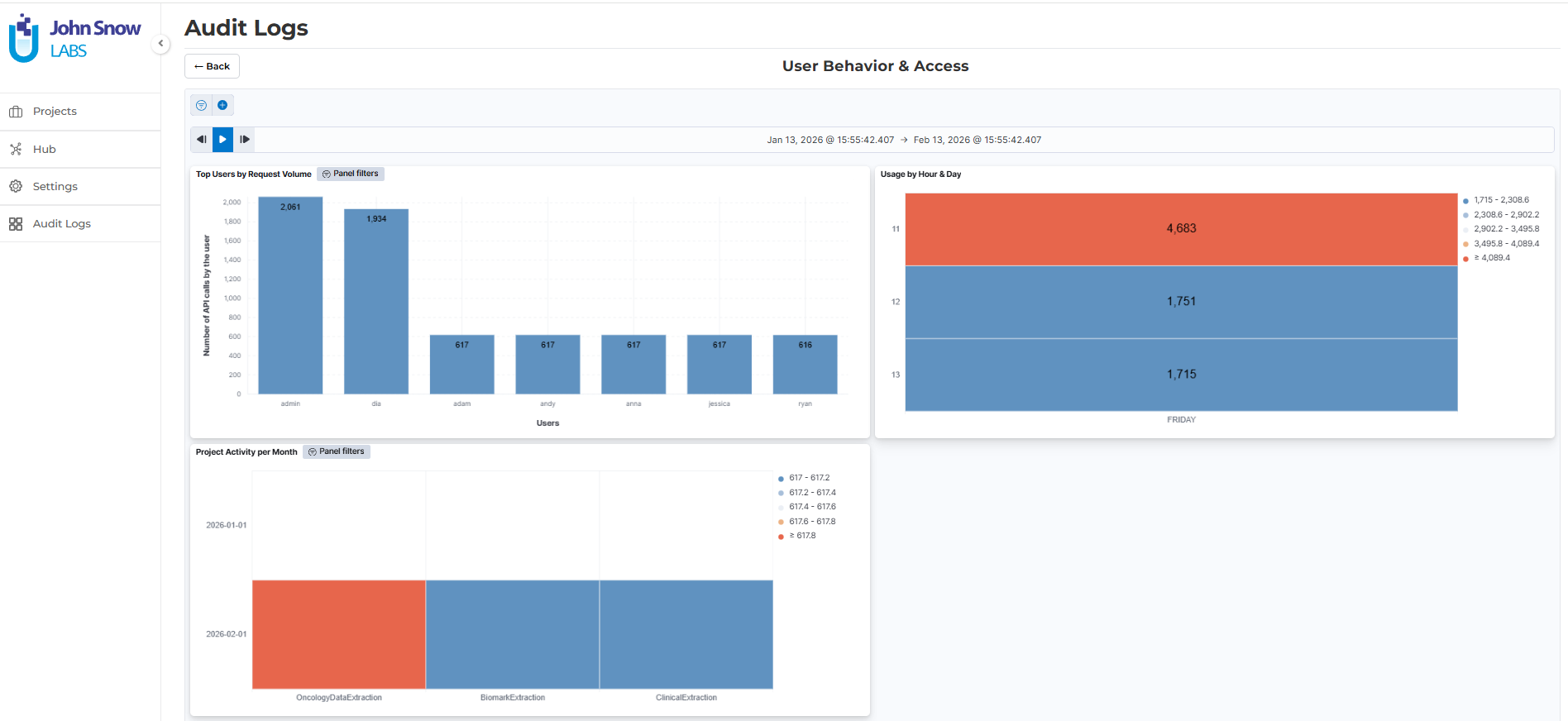

Visual intelligence for pattern detection

Instead of scrolling through text logs, teams see activity patterns through intuitive visualizations:

- Project activity heatmaps reveal unusual access patterns across annotation projects

- Export monitoring dashboards highlight data egress events that require investigation

- User activity tables break down individual actions, making it easy to investigate specific team members or time periods

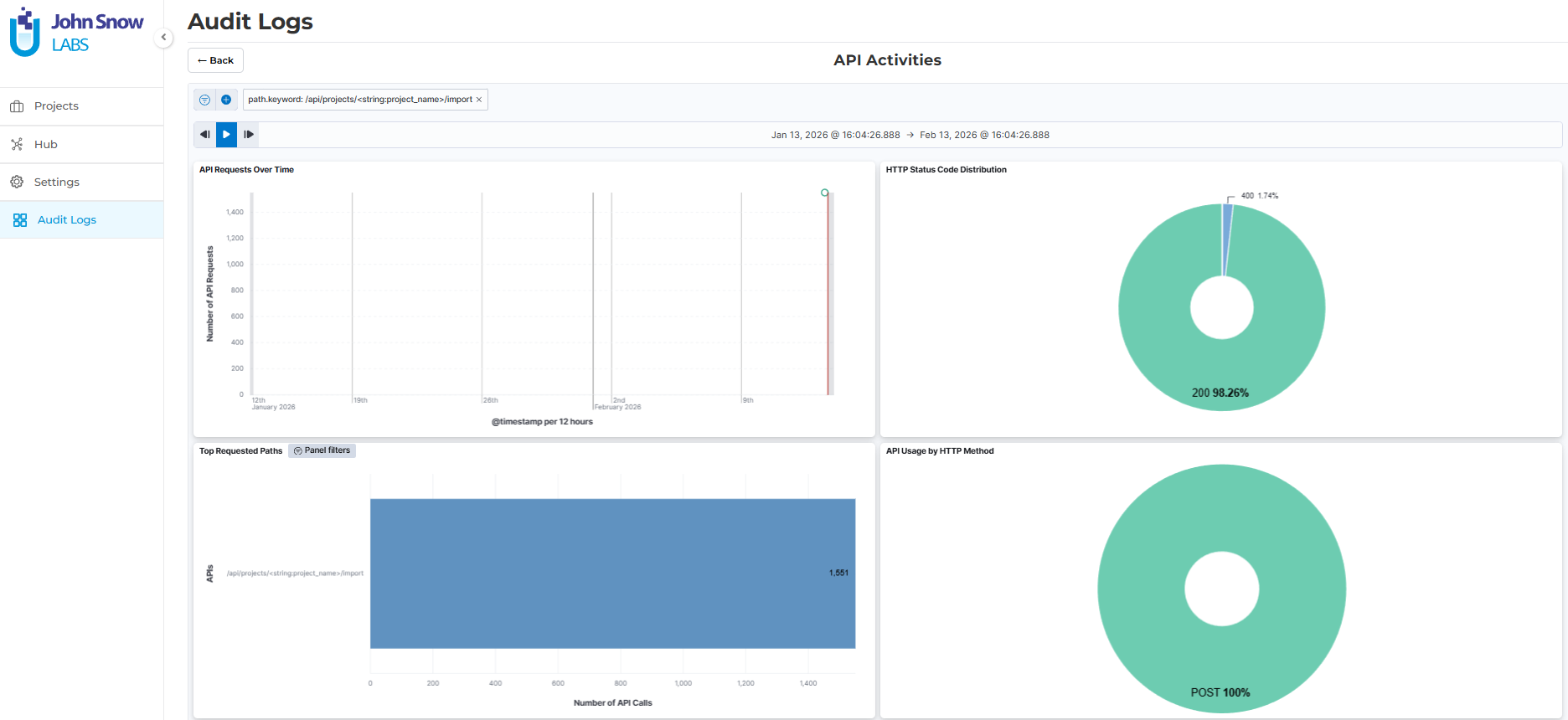

- API usage visualizations help identify anomalous patterns that might indicate security issues

- HTTP status distributions reveal system health and potential access control problems

These visuals aren’t just prettier logs—they enable compliance teams to spot issues that would be invisible in text files. When an annotator suddenly exports significantly more data than usual, or when access patterns change around a specific de-identification project, the dashboard surfaces these signals before they become incidents.
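As a rough illustration of the "exports significantly more data than usual" signal, the sketch below compares each user's export count for the current day against that user's own history. It is not the dashboard's actual detection logic; the function name, the data layout, and the z-score threshold are all assumptions made for the example.

```python
from statistics import mean, stdev


def flag_unusual_exports(history, today, z_threshold=3.0):
    """Return users whose export count today is far above their own baseline.

    history: dict of user -> list of past daily export counts (hypothetical data)
    today:   dict of user -> export count for the current day
    """
    flagged = []
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < 2:
            # Not enough history to form a baseline; skip rather than guess.
            continue
        baseline = mean(past)
        # Floor the spread at 1.0 so a perfectly steady history
        # does not turn a one-export difference into an alert.
        spread = max(stdev(past), 1.0)
        if (count - baseline) / spread > z_threshold:
            flagged.append(user)
    return sorted(flagged)


# Hypothetical export counts per annotator.
history = {"annotator_a": [2, 3, 2, 4, 3], "annotator_b": [5, 6, 5, 7, 6]}
today = {"annotator_a": 40, "annotator_b": 6}
print(flag_unusual_exports(history, today))  # ['annotator_a']
```

A per-user baseline is used here because export volume varies widely between roles; a single global threshold would either miss quiet accounts that suddenly spike or flag heavy but legitimate exporters every day.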

Flexible investigation capabilities

When questions arise (e.g., during audits, investigations, or routine reviews), teams need to drill down quickly. Generative AI Lab provides the following filtering capabilities:

- Filter by project to review all activity within specific projects (e.g., annotation, LLM evaluation, LLM comparison, or de-identification)

- Filter by user to investigate individual team member actions during HITL workflows

- Filter by event type to focus on exports, configuration changes, or PHI access

- Filter by date range to examine activity during specific periods

- Create custom dashboards tailored to different oversight needs

These capabilities transform investigations from multi-day log reviews into focused, efficient queries. When a compliance officer asks “who accessed de-identification project X last month?” the answer takes minutes, not days.
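To make the idea of combining filters concrete, here is a minimal sketch that answers the "who accessed de-identification project X last month?" question over an in-memory list of events. The `AuditEvent` fields and the `filter_events` helper are hypothetical and only mirror the filter dimensions listed above; they are not the platform's schema or API.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AuditEvent:
    # Hypothetical event shape: metadata only, no payloads.
    user: str
    project: str
    event_type: str
    timestamp: datetime


def filter_events(events, project=None, user=None, event_type=None,
                  start=None, end=None):
    """Keep events matching every filter that is set (None means 'any')."""
    result = []
    for e in events:
        if project is not None and e.project != project:
            continue
        if user is not None and e.user != user:
            continue
        if event_type is not None and e.event_type != event_type:
            continue
        if start is not None and e.timestamp < start:
            continue
        if end is not None and e.timestamp >= end:  # end is exclusive
            continue
        result.append(e)
    return result


events = [
    AuditEvent("alice", "deid-x", "task_view", datetime(2024, 5, 3, 9, 0)),
    AuditEvent("bob", "deid-x", "export", datetime(2024, 5, 20, 14, 30)),
    AuditEvent("alice", "annotation-y", "task_view", datetime(2024, 5, 21, 10, 0)),
    AuditEvent("carol", "deid-x", "task_view", datetime(2024, 6, 2, 8, 0)),
]

may = filter_events(events, project="deid-x",
                    start=datetime(2024, 5, 1), end=datetime(2024, 6, 1))
users = sorted({e.user for e in may})
print(users)  # ['alice', 'bob']
```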

Privacy-first architecture of Generative AI Lab

The Audit Logs Dashboard is built for healthcare from the ground up. It maintains comprehensive accountability while protecting sensitive information:

- Metadata Logged Without Exposure. The system logs user IDs, API methods, timestamps, and event context—but never sensitive payloads. Passwords, PHI, and PII remain protected while the audit trail remains complete. When reviewing logs of annotation activity on clinical notes, compliance teams see who accessed which projects and when, without exposing the actual patient data being annotated.

- Configurable Retention. Healthcare organizations have varying requirements for log retention based on regulatory frameworks and internal policies. The dashboard supports user-configurable data retention periods, enabling organizations to maintain audit trails as long as needed for investigations and compliance requirements.

- HIPAA-Compliant by Design. The entire auditing infrastructure is built to support HIPAA compliance. The system tracks access to Protected Health Information, modifications to sensitive data, and exports that move data outside the platform—all with the granularity regulators expect during audits.
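The first two points above can be sketched in a few lines: build an audit record from request metadata while deliberately discarding the payload, then prune records older than a configurable retention window. This is an illustrative sketch only; the function names, the record fields, and the example path are assumptions, not the platform's implementation.

```python
from datetime import datetime, timedelta, timezone


def build_audit_record(user_id, method, path, status, payload=None, now=None):
    """Build a metadata-only audit record.

    `payload` is accepted so a caller can pass the whole request through,
    but it is intentionally never copied into the record.
    """
    return {
        "user_id": user_id,
        "method": method,
        "path": path,
        "status": status,
        "timestamp": now or datetime.now(timezone.utc),
    }


def prune_expired(records, retention_days, now):
    """Keep only records that fall inside the retention window."""
    cutoff = now - timedelta(days=retention_days)
    return [r for r in records if r["timestamp"] >= cutoff]


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
record = build_audit_record(
    "user-17", "POST", "/api/projects/42/tasks", 200,
    payload={"text": "clinical note body"},  # never stored
    now=now,
)
old = build_audit_record("user-17", "GET", "/api/projects/42", 200,
                         now=now - timedelta(days=400))

kept = prune_expired([record, old], retention_days=365, now=now)
print(len(kept))  # 1
```

Dropping the payload at record-construction time, rather than masking it later, means there is no stage at which sensitive content exists in the audit store.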

Controlling data movement: prevention and detection

Audit logging reveals what happened, but healthcare organizations also need to prevent problems before they occur. Generative AI Lab provides comprehensive controls over how data enters and leaves the platform.

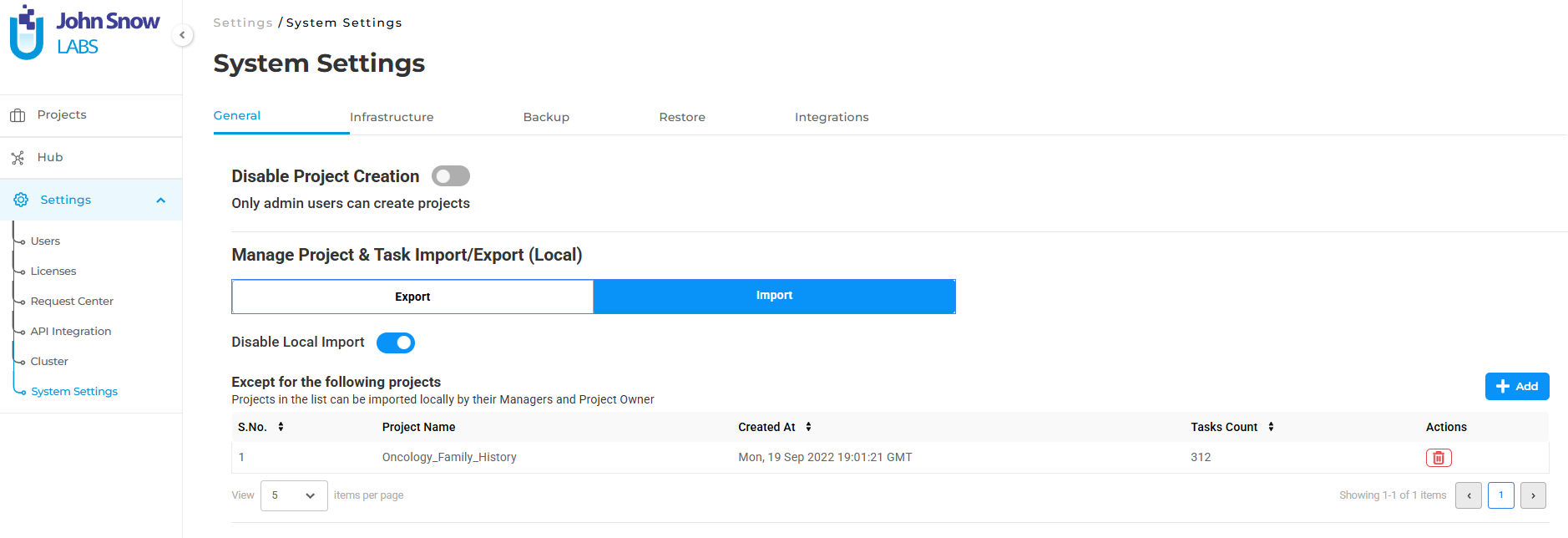

Restricting local imports

Many compliance incidents start with uncontrolled data ingress. An annotator imports a CSV of patient records from their laptop. A researcher uploads files from an unsecured location. Each represents risk.

Administrators can globally disable local file imports, ensuring data only flows through auditable, encrypted cloud channels like Amazon S3 or Azure Blob Storage. This means annotation projects can only pull data from approved, monitored sources—eliminating the risk of uncontrolled PHI entering the platform.

Project-level exceptions let administrators keep local imports available for specific use cases (like internal development projects using synthetic data) while enforcing strict controls for PHI processing workflows.

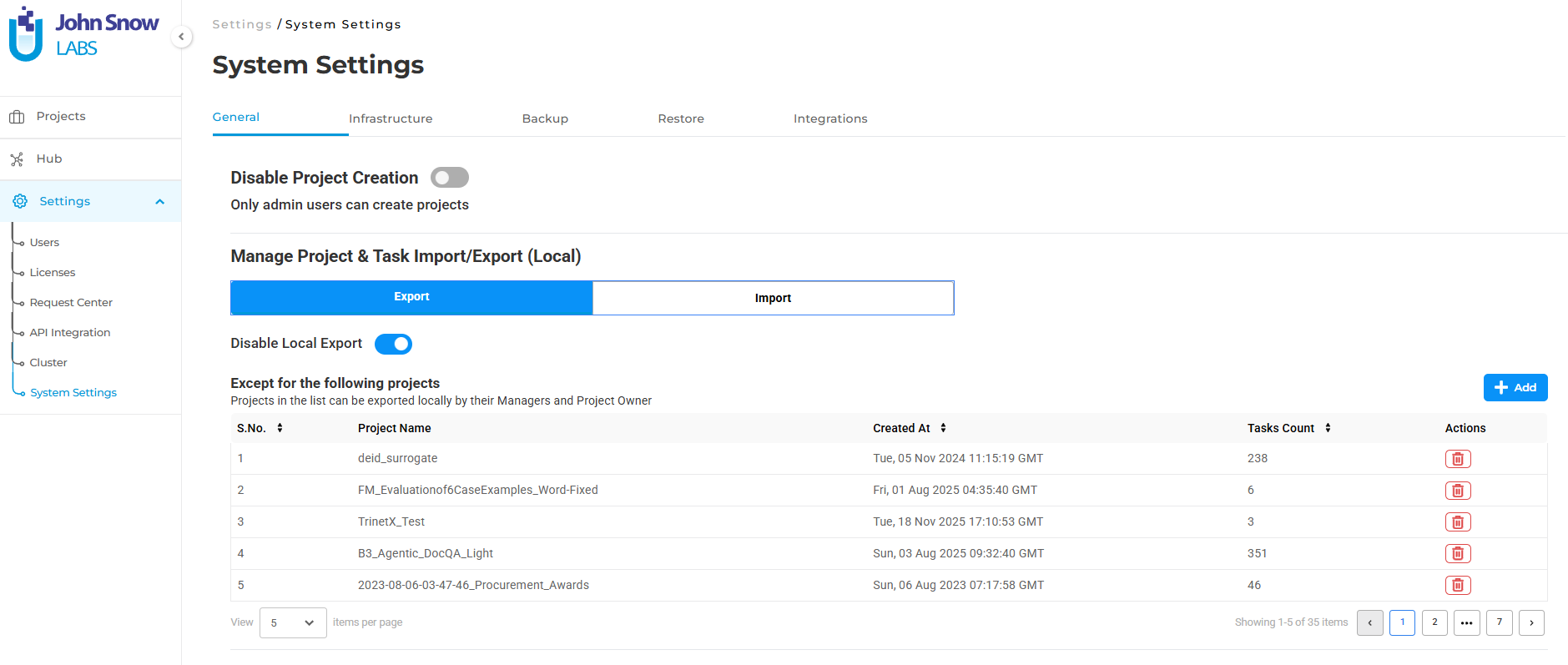

Restricting local exports

Local exports represent one of the highest risks in AI workflows. A single “download CSV” action can move thousands of patient records outside secure infrastructure onto uncontrolled devices.

Administrators can disable local exports entirely, forcing all data to be exported only to approved cloud storage locations. When preparing training datasets from annotated clinical notes, teams must export to S3 buckets rather than local workstations—ensuring data remains within secure, auditable infrastructure.

Like import restrictions, export controls support project-level exceptions, giving administrators granular control over which projects require cloud-only workflows and which can operate with more flexibility.
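The interaction between a global restriction and project-level exceptions can be expressed as a small policy check. The sketch below is a hypothetical model of that rule, assuming a global on/off switch per direction plus a set of exempt project names; the class, field, and project names are illustrative and do not reflect the platform's actual configuration.

```python
from dataclasses import dataclass, field


@dataclass
class TransferPolicy:
    # Global switches: False means local transfers are disabled by default.
    local_import_enabled: bool = False
    local_export_enabled: bool = False
    # Projects that are explicitly exempt from the global restriction.
    import_exceptions: set = field(default_factory=set)
    export_exceptions: set = field(default_factory=set)


def is_local_transfer_allowed(policy, project, direction):
    """Allow a local transfer if enabled globally or exempted for the project."""
    if direction == "import":
        return policy.local_import_enabled or project in policy.import_exceptions
    if direction == "export":
        return policy.local_export_enabled or project in policy.export_exceptions
    raise ValueError(f"unknown direction: {direction!r}")


policy = TransferPolicy(import_exceptions={"synthetic-dev"})

print(is_local_transfer_allowed(policy, "synthetic-dev", "import"))   # True
print(is_local_transfer_allowed(policy, "clinical-notes", "import"))  # False
print(is_local_transfer_allowed(policy, "synthetic-dev", "export"))   # False
```

Keeping import and export exceptions separate reflects the text above: a project that may accept local synthetic data does not automatically gain the right to download results locally.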

Administrative control of resources

Uncontrolled project creation can lead to unauthorized processing of PHI or unmonitored annotation workflows. Generative AI Lab lets administrators restrict project creation rights to Admin users only, ensuring that all annotation projects, de-identification workflows, and AI training initiatives go through proper approval before launch.

This prevents scenarios where well-meaning team members spin up annotation projects on sensitive data without proper security review or compliance approval.

Real-world benefits across healthcare workflows

These capabilities deliver concrete value across the entire healthcare AI lifecycle:

For HITL validation workflows

When clinical experts validate AI-extracted entities, assertions, or relationships from medical records, every interaction is logged. Supervisors can review who validated which predictions, when corrections were made, and whether validation patterns suggest training data issues or model problems.

If an AI model incorrectly extracts medication dosages from clinical notes, the audit trail shows which annotators caught the errors, enabling quality analysis and targeted retraining.

For de-identification projects

De-identification is a high-stakes process. Teams need to prove that PHI was properly masked or removed, that only authorized personnel accessed sensitive data, and that final datasets were exported only to approved locations.

The Audit Logs Dashboard provides the complete chain of custody: who created the de-identification project, which models were used, who validated results, and where the final de-identified dataset was exported. Combined with restricted exports, this ensures de-identified training data never leaves secure cloud infrastructure.

For compliance readiness

When auditors ask for evidence of HIPAA compliance, healthcare organizations need more than assurances—they need documentation. The dashboard provides:

- Comprehensive records of who accessed PHI within annotation projects

- Documentation of data modifications and when they occurred

- Evidence that exports only went to approved, secure locations

- Proof that access controls and restrictions were enforced consistently

This turns compliance audits from stressful unknowns into straightforward evidence reviews.

For security investigations

When suspicious activity occurs, such as unusual access patterns, unexpected exports, or anomalous API usage, security teams need to investigate quickly. The visual dashboards surface these patterns before they escalate, and the flexible filtering enables rapid investigation of specific events, users, or timeframes.

If an annotator’s account shows unusual export activity, security teams can immediately determine what was exported, when, and whether it represents a genuine security incident or a legitimate workflow change.

Implementation: Built for healthcare IT

Healthcare IT teams need solutions that integrate cleanly into existing infrastructure without creating new security or operational burdens.

Simple Activation. Audit logging can be enabled during installation or upgrade with simple installer flags. The system automatically provisions the necessary backend services, including Elasticsearch if it is not already present.

Flexible Deployment. Organizations can deploy Elasticsearch internally for complete data ownership or connect to existing external Elasticsearch clusters to unify logging infrastructure across the organization. This flexibility ensures the audit logging solution fits into existing IT architectures rather than forcing new patterns.
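For teams that connect an existing Elasticsearch cluster, audit events could in principle be queried with the standard Elasticsearch query DSL. The sketch below only builds a request body; the field names (`user_id`, `event_type`, `@timestamp`) are assumptions for illustration, assumed to be mapped as keyword and date fields, and the actual index layout used by Generative AI Lab should be checked before relying on any of them.

```python
import json


def build_export_query(user_id, start, end, size=100):
    """Build a query-DSL body for one user's export events in a time window.

    All field names here are hypothetical; adjust them to the real mapping.
    """
    return {
        "query": {
            "bool": {
                # Filter context: exact matches, no relevance scoring.
                "filter": [
                    {"term": {"user_id": user_id}},
                    {"term": {"event_type": "export"}},
                    {"range": {"@timestamp": {"gte": start, "lt": end}}},
                ]
            }
        },
        "sort": [{"@timestamp": {"order": "desc"}}],
        "size": size,
    }


body = build_export_query("user-17", "2024-05-01T00:00:00Z", "2024-06-01T00:00:00Z")
print(json.dumps(body, indent=2))
```

The resulting body can be sent to a cluster's search endpoint with whichever client the organization already uses.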

Privacy and Performance. The logging infrastructure captures comprehensive activity data without impacting platform performance or exposing sensitive information. Healthcare teams can work at full speed while maintaining complete audit trails.

Why this matters now

As healthcare AI adoption accelerates, the gap between innovation and governance grows. Organizations that move fast without visibility create compliance debt that compounds over time. The first HIPAA violation or security incident can erase years of AI progress.

The organizations that will lead healthcare AI aren’t just those with the best models; they’re the ones that can deploy AI responsibly, demonstrate compliance clearly, and respond to incidents quickly.

Generative AI Lab’s comprehensive approach to AI governance, combining real-time audit logging, visual analytics, and preventive controls, represents a shift from AI platforms that happen to have some compliance features to platforms built from the ground up for healthcare’s unique requirements.

When annotation teams validate AI predictions, when de-identification workflows prepare training data, when clinical experts review model outputs, every action happens within a framework of accountability, transparency, and control.

That’s not just compliance. That’s how healthcare organizations earn the trust needed to transform patient care with AI.

Next steps

Ready to deploy healthcare AI workflows with complete visibility and control?

Deploy in One Click. Generative AI Lab is available on AWS Marketplace and Azure Marketplace. Subscribe and launch your HIPAA-compliant AI platform in minutes with all audit logging and governance features enabled out of the box.

On-Premise Deployment. Need complete control over your infrastructure? Our enterprise team will work with you to deploy Generative AI Lab in your own environment with full support for your security requirements and compliance framework.

Contact Sales for On-Premise Options →

Questions about HIPAA compliance, audit logging, or deployment options? Our healthcare AI specialists are here to help you build AI workflows you can trust.