How to detect signature in image-based documents

For document comprehension pipelines in the healthcare and the financial area, we need some time to detect the signature of the document or classify documents. In most cases, it is image-based scanned documents.

We implemented Signature Detection in Spark OCR as ImageSignatureDetector transformer.

It based on Cascade Region-based CNN networks:

The architectures of CASCADE-R-CNN. “I” is input image, “conv” backbone convolutions, “pool” region-wise feature extraction, “H” network head, “B” bounding box, “C” classification and “B0” is proposals.

Let’s define ImageSignatureDetector and load pre-trained model.

signature_detector = ImageSignatureDetector() \

.pretrained("image_signature_detector_gsa0628", "en", "public/ocr/models") \

.setInputCol("image") \

.setOutputCol("signature_regions") \

.setScoreThreshold(0.6)

Note: Full code of the example in Jupyter notebook you can found here. In order to run the code, you will need a Spark OCR license, for which a 30-day free trial is available here.

For draw bounding boxes to the original image we can use ImageDrawRegion transformer:

draw_regions = ImageDrawRegions() \

.setInputCol("image") \

.setInputRegionsCol("signature_regions") \

.setOutputCol("image_with_regions")

We can assemble the Spark ML pipeline and call it now:

pipeline = PipelineModel(stages=[

binary_to_image,

signature_detector,

draw_regions

])

result = pipeline.transform(image_df).cache()

display_images(result, "image_with_regions")

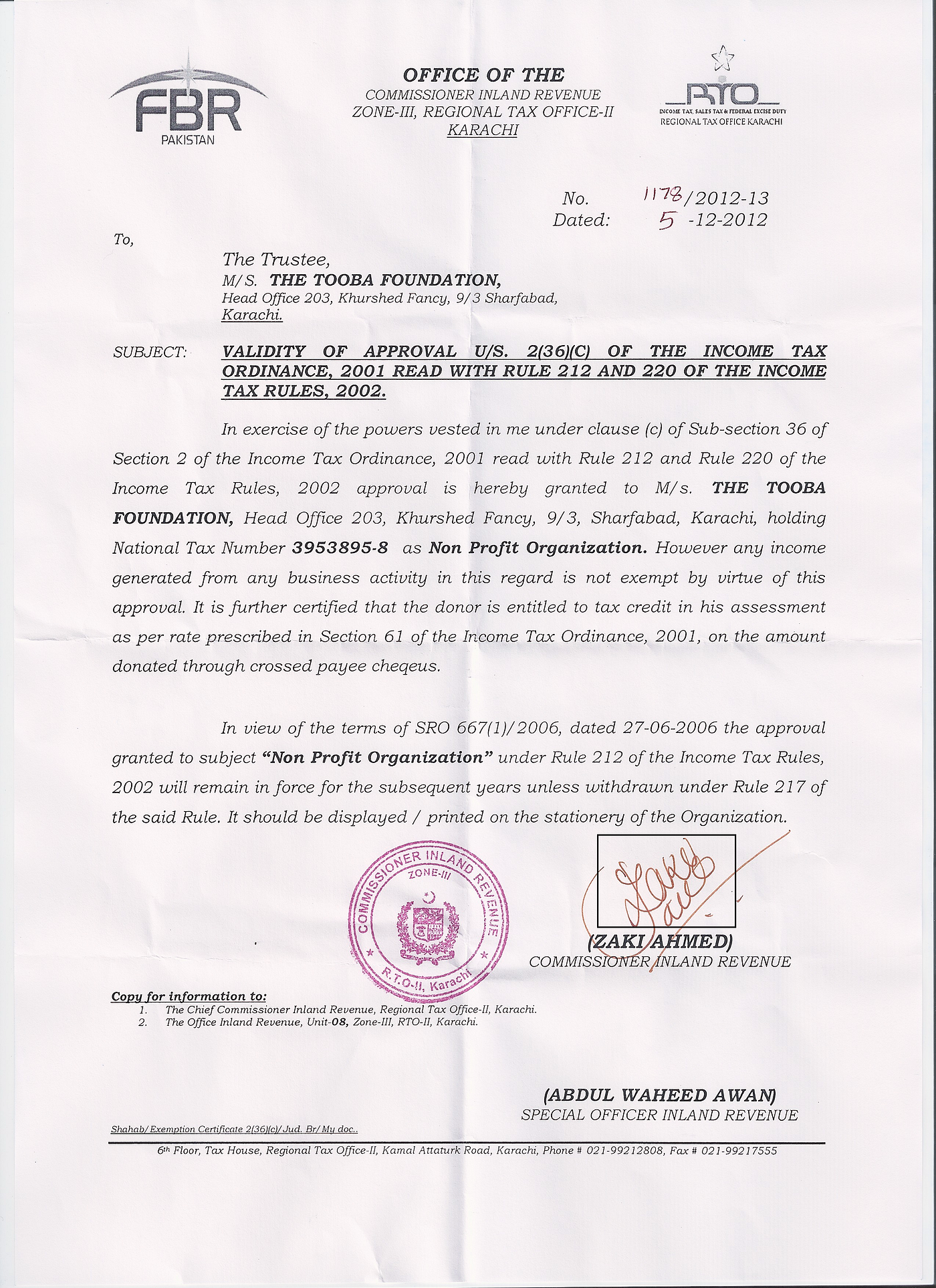

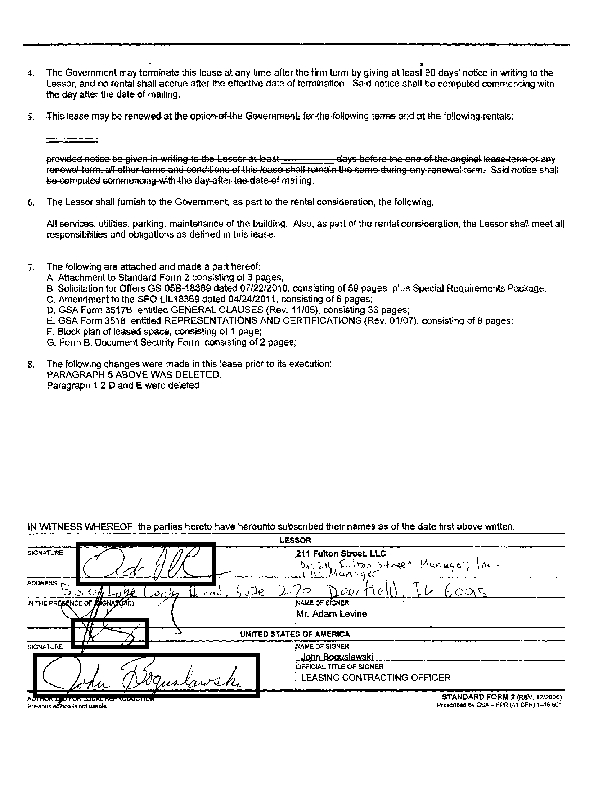

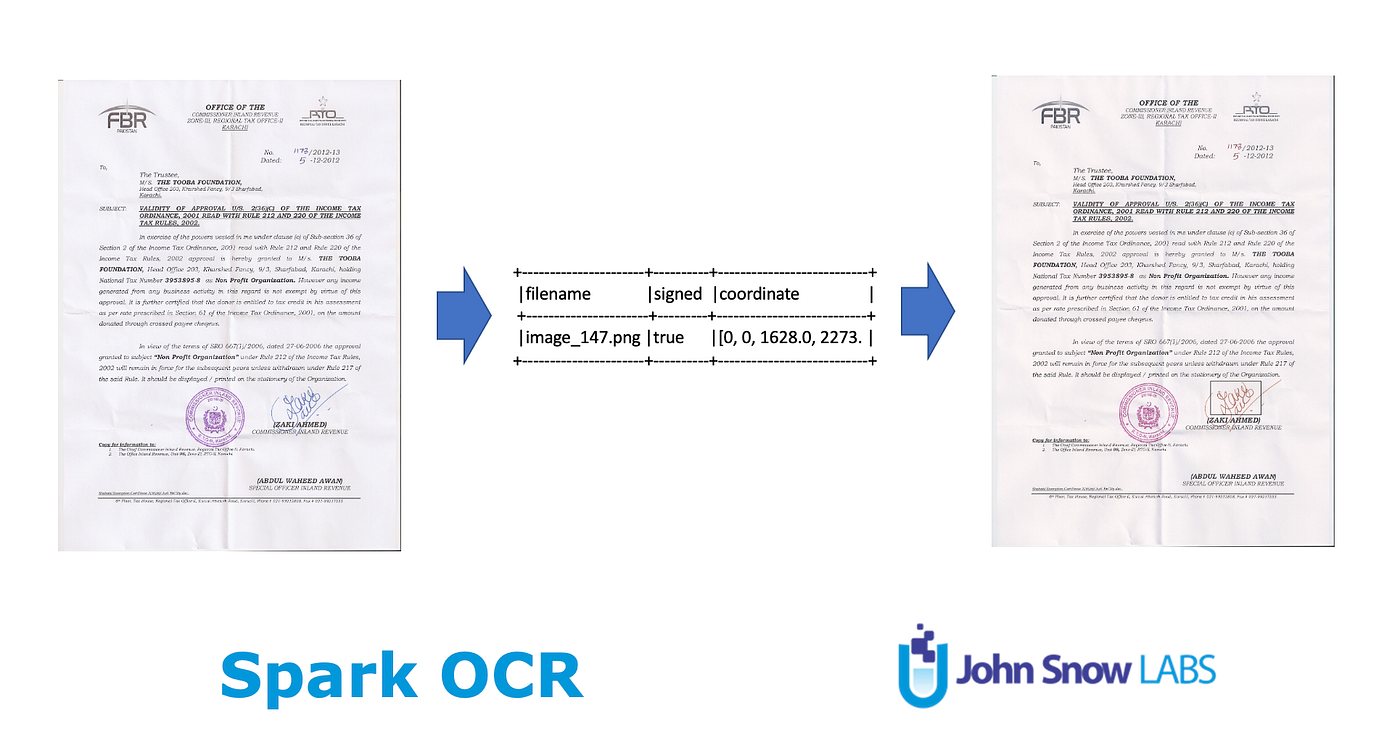

Output image:

‘signature_regions’ has the following schema:

result.select("signature_regions").printSchema()root

|-- signature_regions: array (nullable = true)

| |-- element: struct (containsNull = true)

| | |-- index: integer (nullable = false)

| | |-- page: integer (nullable = se)

| | |-- x: float (nullable = false)

| | |-- y: float (nullable = false)

| | |-- width: float (nullable = false)

| | |-- height: float (nullable = false)

| | |-- score: float (nullable = false)

| | |-- label: integer (nullable = false)

Excep<spant< span=””> for coordinates, it includes also a score. The score is a probability for detected signature. We can filter results by setting the threshold using setScoreThreshold method of ImageSignatureDetector transformer.</spant<>

This approach is useful for document classification too. Let’s add the `signed` field which checks the count of detected regions for classify images (signed/unsigned):

result.withColumn("coordinate", f.explode_outer(f.col("signature_regions"))) \

.withColumn("filename", f.element_at(f.split("path", "/"), -1))\

.withColumn('signed', f.size(f.col("signature_regions")) > 0) \

.select("filename", "signed", "coordinate") \

.show(truncate=False)

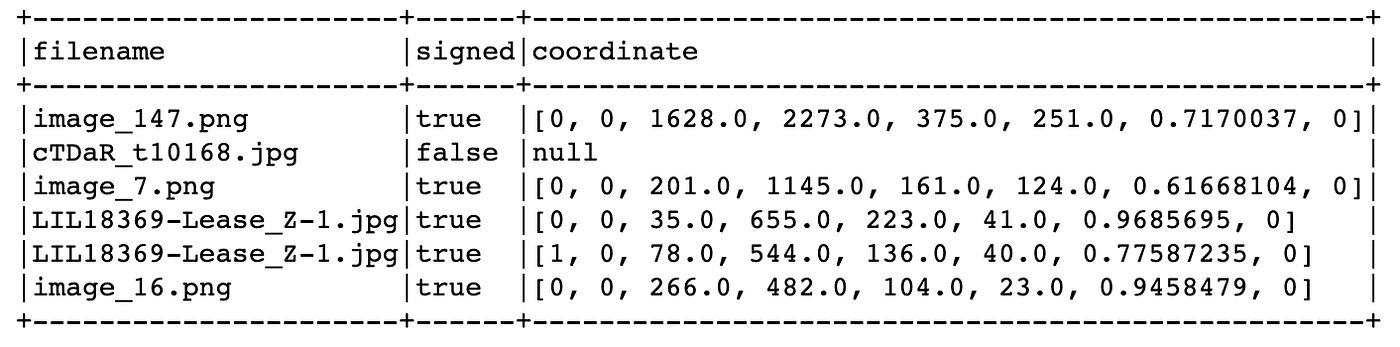

Results can contain one or more signatures. For example:

We can apply Signature Detection also to the PDF documents using PdfToImage transformer:

pdf_to_image = PdfToImage() \

.setInputCol("content") \

.setOutputCol("image")

So we have an end-to-end scalable solution based on Spark NLP OCR for Signature Detection and Classification.

Links

- Notebook with full example

- Cascade R-CNN: Delving into High Quality Object Detection

- More examples you can found in Spark OCR Workshop

- Spark OCR Documentation

- PDF OCR