What is the current state of the mental health crisis?

Globally, mental health disorders affect over 970 million people, according to the Global Burden of Disease Study. In the U.S., one in five adults lives with a mental illness, and rates of depression, anxiety, and suicide are rising. One in 20 U.S. adults experiences serious mental illness each year, and half of all lifetime mental illnesses begin by age 14. Compounding the crisis is a critical shortage of mental health professionals, with an average of 350 patients per provider. These challenges demand scalable, accessible solutions.

How does Conversational AI offer new solutions in mental health?

Conversational AI, powered by Natural Language Processing (NLP), bridges accessibility gaps by offering 24/7 support. These systems engage users in dialogue, detect mental health signals, and guide them through therapeutic or triage processes. John Snow Labs’ Healthcare NLP powers such applications with over 2,000 pre-trained models tailored for clinical understanding. This infrastructure is designed to keep AI-based mental health solutions reliable and clinically valid across deployment scenarios ranging from primary care to remote consultations.

How does the technology behind mental health AI work?

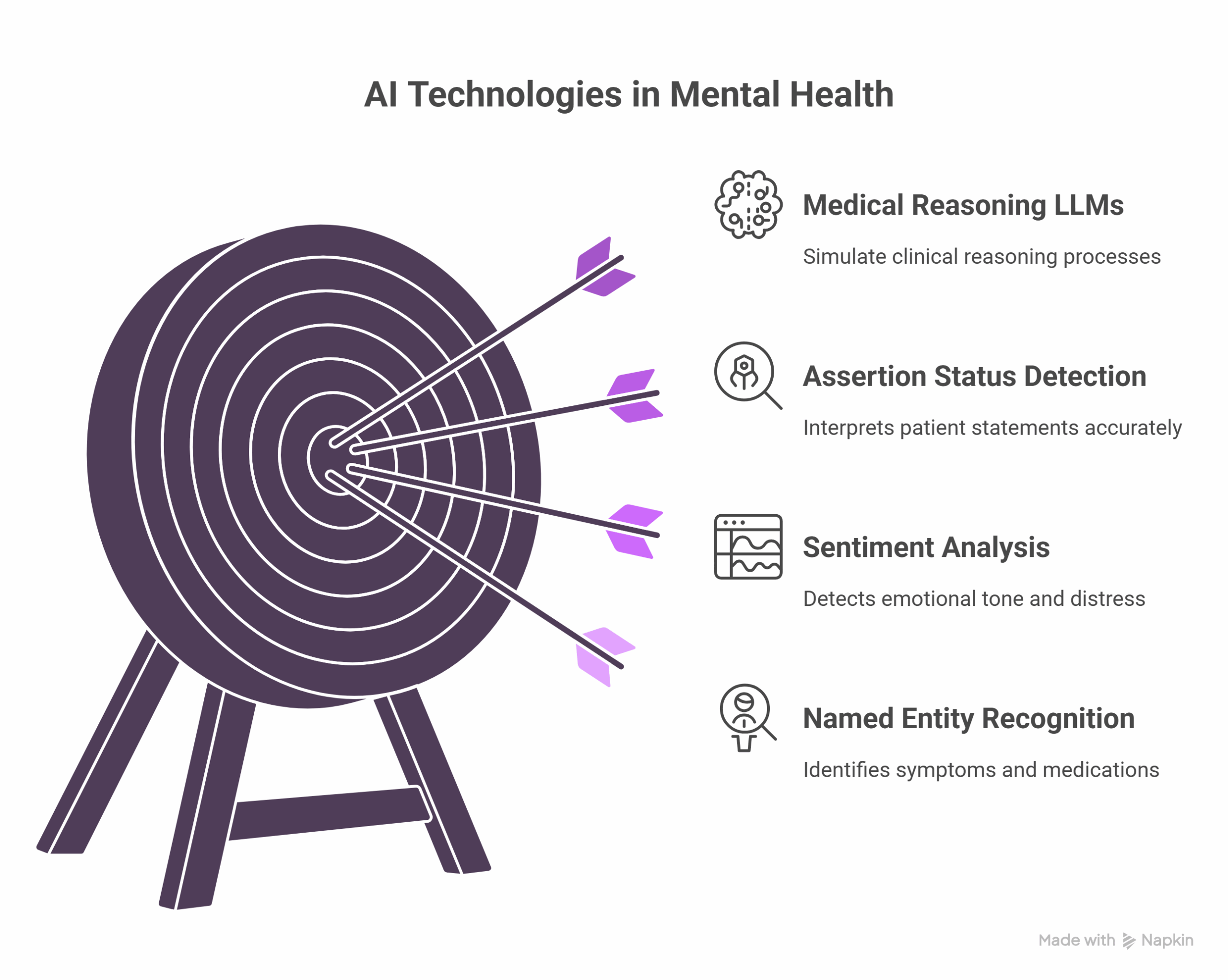

At its core, mental health-focused AI uses:

- Named Entity Recognition (NER): Identifies symptoms, medications, and disorders from clinical conversations, enabling early detection and detailed documentation of patient-reported concerns.

- Sentiment Analysis: Detects emotional tone and distress patterns by analyzing linguistic markers and changes in speech, providing real-time insights into mood fluctuations.

- Assertion Status Detection: Distinguishes affirmed, negated, or hypothetical conditions, helping clinicians accurately interpret patient statements and avoid misdiagnosis.

- Medical Reasoning LLMs: Enable clinical inference aligned with psychiatric guidelines by drawing from large-scale medical corpora and simulating clinical reasoning processes.

These technologies ensure patient context is preserved and that AI interactions remain clinically meaningful. The result is a system capable of not just identifying issues, but supporting decision-making and personalized care planning.
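To make the interplay between entity recognition and assertion status more concrete, here is a minimal Python sketch. It is a toy, rule-based illustration only: the lexicon, the cue lists, and the names `LEXICON`, `extract`, and `assertion_status` are assumptions made for this example and are not the John Snow Labs API, which relies on trained clinical models rather than keyword rules.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical toy lexicon; a production system would use trained clinical models.
LEXICON = {
    "insomnia": "SYMPTOM",
    "panic attacks": "SYMPTOM",
    "depression": "DISORDER",
    "sertraline": "MEDICATION",
}

# Illustrative cue lists, not a validated clinical vocabulary.
NEGATION = re.compile(r"\b(no|not|denies|without)\b")
HYPOTHETICAL = re.compile(r"\b(if|might|could|worried about|risk of)\b")
CLAUSE_BREAK = re.compile(r"[,;]|\bbut\b")


@dataclass
class Entity:
    text: str
    label: str
    assertion: str  # "affirmed", "negated", or "hypothetical"


def assertion_status(sentence: str, start: int) -> str:
    """Classify an entity using cues that precede it within the same clause."""
    prefix = sentence[:start]
    # Only the clause immediately before the entity is searched for cues.
    clause = CLAUSE_BREAK.split(prefix)[-1]
    if NEGATION.search(clause):
        return "negated"
    if HYPOTHETICAL.search(clause):
        return "hypothetical"
    return "affirmed"


def extract(text: str) -> List[Entity]:
    """Find lexicon terms sentence by sentence and attach an assertion status."""
    entities = []
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        lowered = sentence.lower()
        for term, label in LEXICON.items():
            pattern = r"\b" + re.escape(term) + r"\b"
            for match in re.finditer(pattern, lowered):
                status = assertion_status(lowered, match.start())
                entities.append(Entity(term, label, status))
    return entities


note = (
    "Patient reports insomnia but denies panic attacks. "
    "She takes sertraline and is worried about depression returning."
)
for entity in extract(note):
    print(entity.text, entity.label, entity.assertion)
```

On this sample note, insomnia and sertraline come out as affirmed, panic attacks as negated, and depression as hypothetical. Real clinical text is far messier, which is why trained models are used in practice instead of keyword rules like these.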

How is Conversational AI used for early mental health detection?

Several AI applications demonstrate strong diagnostic potential:

- Aiberry conducts automated mental health assessments using verbal and visual cues, offering rapid triage for large patient volumes without clinician burden.

- Limbic Access achieved 93% accuracy across eight mental disorders, validated in clinical settings, enabling scalable mental health triage in public health systems.

- Voice analysis tools detect anxiety and depression from speech patterns, including changes in tone, pitch, and pace, which may indicate distress.

- Social media mining identifies posts indicative of suicidal ideation or depressive episodes, helping to detect unreported or emerging mental health crises.

Studies published in Nature confirm AI’s potential in identifying mental health signals before clinical diagnosis, supporting proactive care and risk mitigation.

How can Conversational AI provide ongoing mental health support?

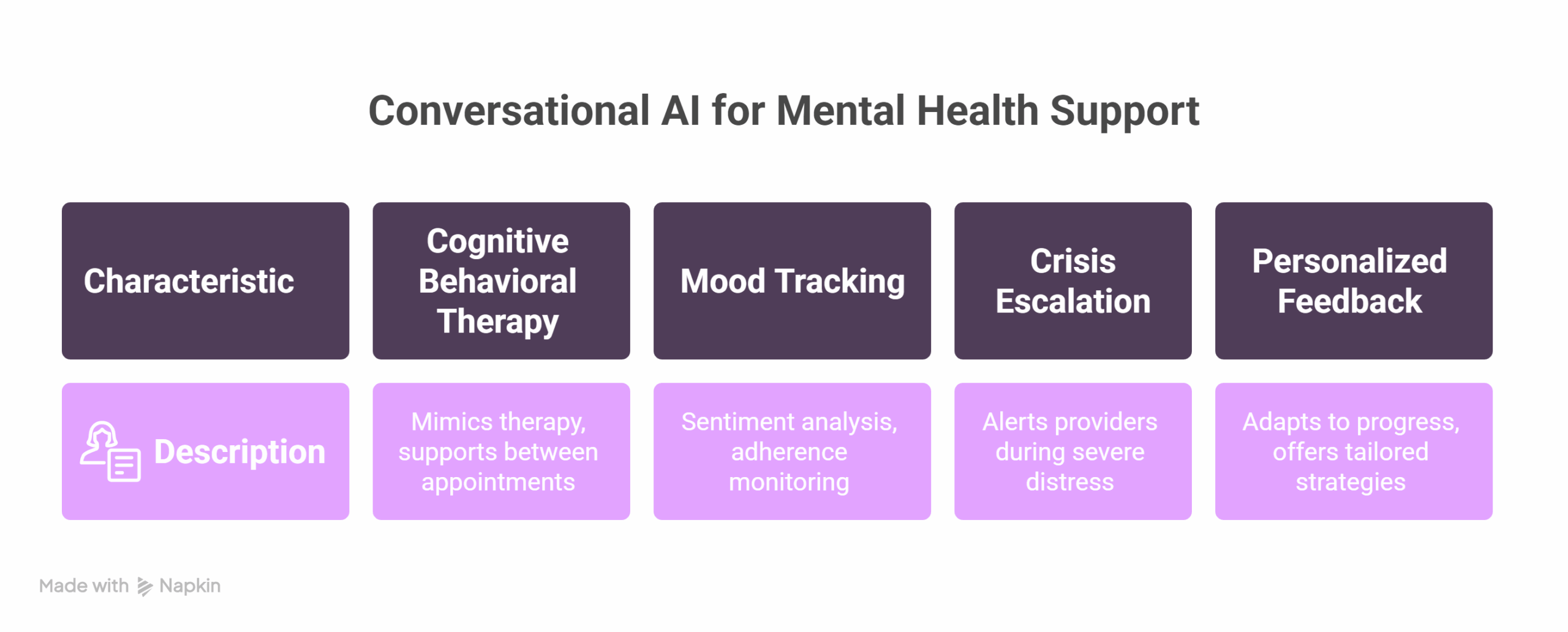

AI chatbots now deliver:

- CBT support: Guided sessions and emotional check-ins complement Cognitive Behavioral Therapy (CBT), mirroring traditional therapeutic methods and supporting patients between appointments.

- Mood tracking: Sentiment analysis and adherence monitoring give ongoing visibility into patient wellbeing and treatment response.

- Crisis escalation: When patterns signal severe distress, AI can alert human providers or emergency services, ensuring rapid response in critical moments.

- Personalized feedback: By adapting to patient progress and preferences over time, these systems offer tailored recommendations and coping strategies.

These tools reduce the time between symptom onset and care, improving continuity and empowering patients with on-demand emotional support.
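As a rough illustration of how mood tracking can feed crisis escalation, the sketch below keeps a rolling window of sentiment scores and signals escalation when the average falls to a threshold. The `MoodTracker` class, the score range of -1 to 1, the window size, and the threshold are all hypothetical choices for this example; they are not clinically validated, and "escalate" here simply means handing the case to a human provider.

```python
from collections import deque
from statistics import mean


class MoodTracker:
    """Rolling-average mood monitor (illustrative only, not clinically validated)."""

    def __init__(self, window: int = 3, threshold: float = -0.5):
        # Keep only the most recent `window` scores.
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def record(self, score: float) -> str:
        """Store a sentiment score in [-1, 1] and return the next action."""
        if not -1.0 <= score <= 1.0:
            raise ValueError("score must be between -1 and 1")
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        # Escalate only once a full window shows sustained low mood.
        if window_full and mean(self.scores) <= self.threshold:
            return "escalate"
        return "continue"


tracker = MoodTracker(window=3, threshold=-0.5)
for daily_score in [-0.2, -0.6, -0.8, 0.4]:
    print(daily_score, tracker.record(daily_score))
```

Requiring a full window before escalating trades speed for fewer false alarms. A real deployment would likely pair a trend rule like this with immediate escalation on explicit crisis language.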

What are examples of real-world Conversational AI in healthcare?

- The UK NHS adopted Limbic Access, improving recovery rates in primary care through consistent triage and early intervention.

- VCare Companion deployed robotic AI in assisted living, cutting assessment time by 40 minutes per patient and improving documentation efficiency.

- Automated systems lowered no-show rates by 27% through timely reminders, reducing scheduling friction and enhancing continuity of care.

These outcomes point to enhanced engagement, improved care delivery, and clinician time savings, key factors in improving system-wide mental health capacity.

What are the ethical and regulatory challenges in AI mental health?

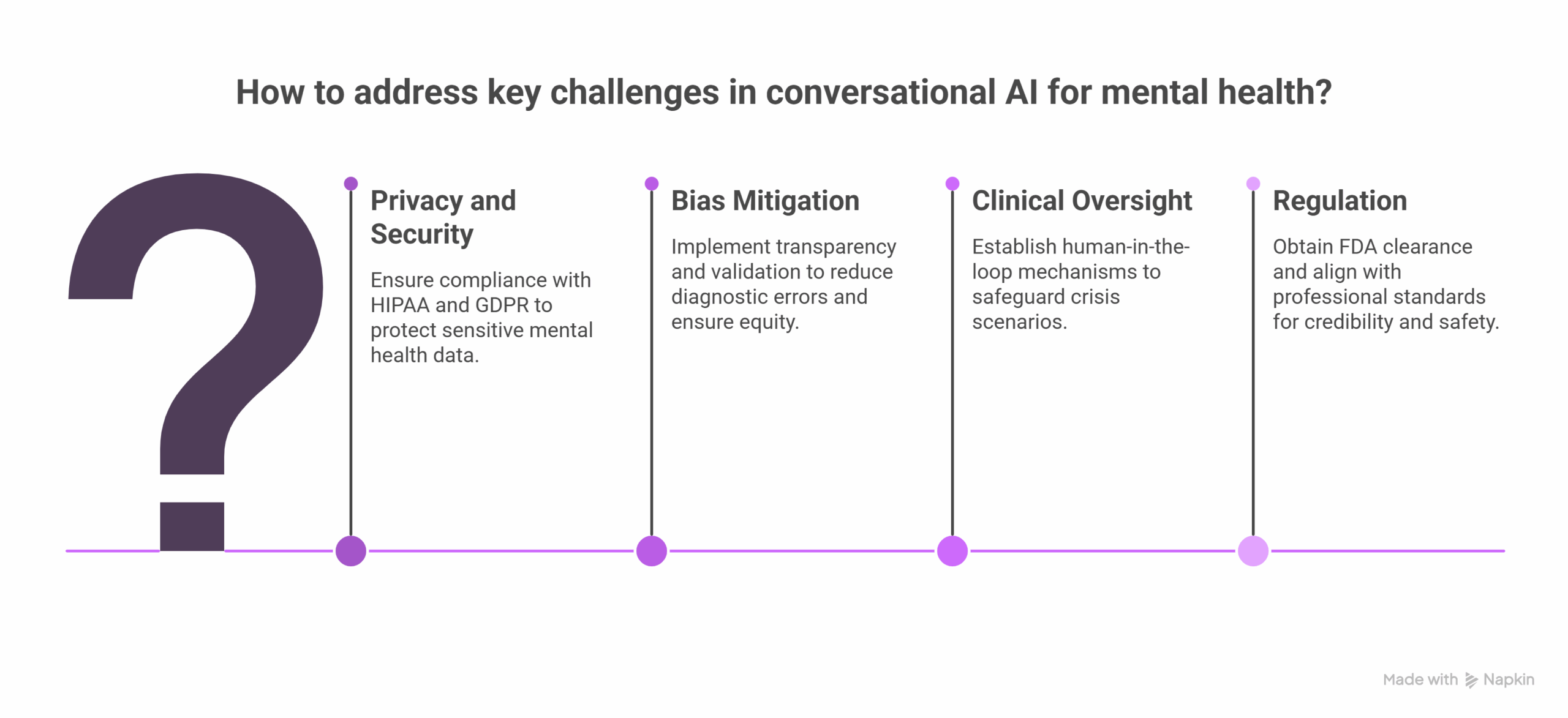

Conversational AI must address:

- Privacy and Security: Compliance with HIPAA and GDPR is essential, especially given the sensitivity of mental health data.

- Bias Mitigation: Model transparency and validation reduce diagnostic errors and ensure equity in care across populations.

- Clinical Oversight: Human-in-the-loop mechanisms safeguard crisis scenarios and prevent harm from autonomous decision-making.

- Regulation: In the U.S., tools intended for diagnostic use require FDA clearance and must align with professional standards to maintain credibility and safety.

Ethical deployment ensures AI augments rather than replaces human care, prioritizing safety, accuracy, and accountability.
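The bias-mitigation point above can be made concrete with a simple subgroup check. The sketch below compares a screening model's recall (sensitivity) across groups. The group labels, the sample records, and the function names are assumptions invented for this example. A large gap between groups would suggest the model misses true cases more often for some populations and needs further validation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, int, int]  # (group, true_label, predicted_label), labels are 0 or 1


def recall_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Return recall (true positives / all actual positives) for each group."""
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, truth, predicted in records:
        if truth == 1:
            if predicted == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def recall_gap(recalls: Dict[str, float]) -> float:
    """Difference between the best-served and worst-served group."""
    return max(recalls.values()) - min(recalls.values())


# Made-up evaluation records for two hypothetical groups, A and B.
sample = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 1, 0),
]
recalls = recall_by_group(sample)
print(recalls, round(recall_gap(recalls), 3))
```

Recall is only one of several fairness lenses. Which metric matters most depends on the clinical cost of missed cases versus false alarms.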

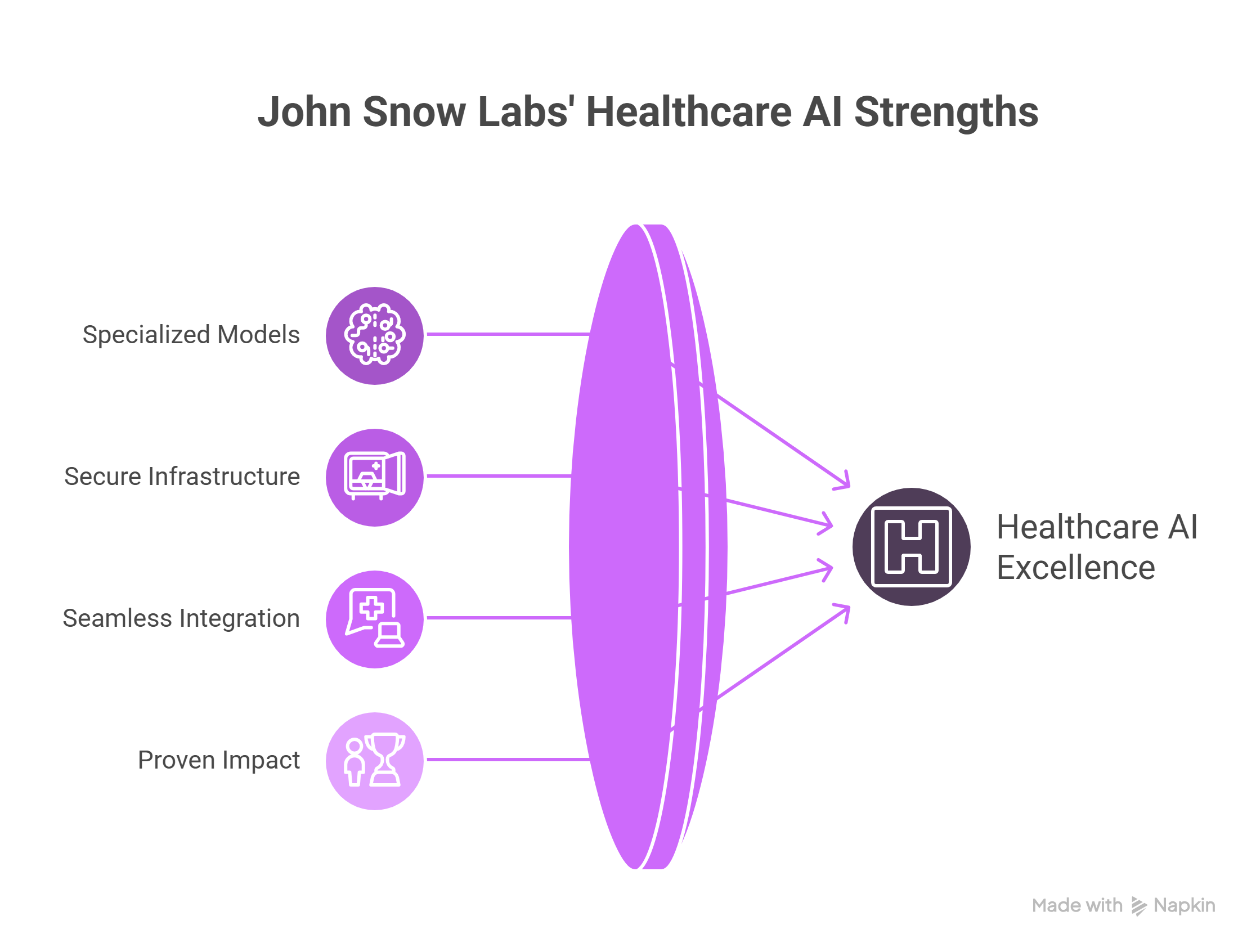

What makes John Snow Labs uniquely suited for healthcare AI?

- Specialized Models: Domain-specific LLMs trained on healthcare data, ensuring relevance and clinical accuracy.

- Secure Infrastructure: Enterprise-grade compliance and privacy protections to meet stringent healthcare standards.

- Seamless Integration: APIs and EHR compatibility allow flexible deployment across various health IT environments.

- Proven Impact: Used by major health systems and research institutions to support clinical and operational excellence.

With a focus on clinically validated AI, John Snow Labs supports real-world mental health transformation at scale.

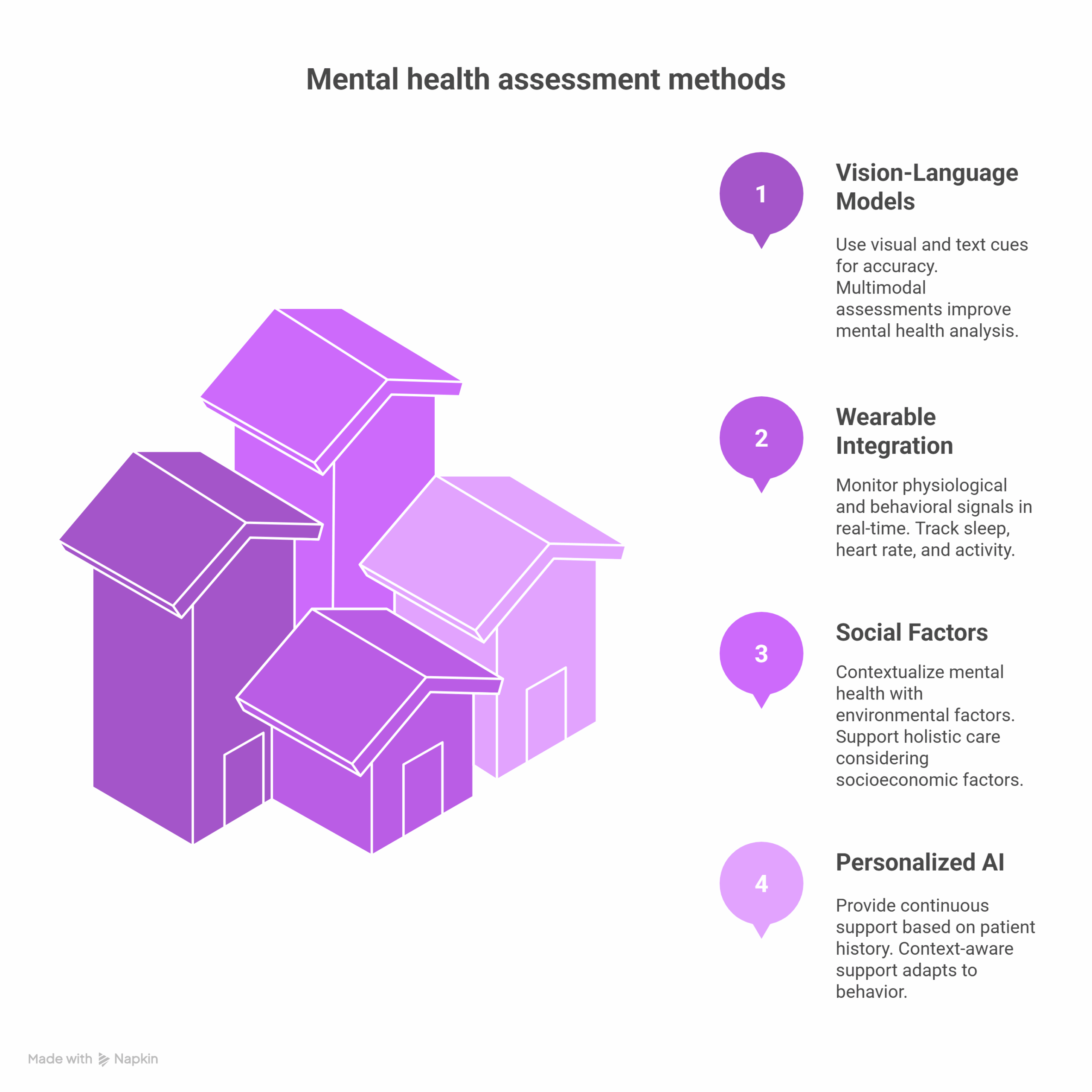

What innovations are shaping the future of mental health AI?

Emerging trends include:

- Vision-Language Models: Combining visual cues (e.g., facial expressions) with text for multimodal assessments that enhance accuracy.

- Wearable Integration: Monitoring physiological and behavioral signals (like sleep patterns, heart rate, and activity levels) for real-time insights.

- Social Determinants Integration: Contextualizing mental health within environmental and socioeconomic factors to support holistic care.

- Personalized AI Agents: Offering continuous, context-aware support based on patient history, behavior, and preferences.

These advances promise more comprehensive and individualized care, while paving the way for preventive mental health strategies.
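One simple way wearable signals could produce real-time insights is to compare each new reading with the person's own baseline. The sketch below computes a z-score for the latest night's sleep against recent history. The function names, the sample values, and the cutoff of 2 standard deviations are illustrative assumptions, not a clinical rule.

```python
from statistics import mean, stdev
from typing import Sequence


def deviation_from_baseline(history: Sequence[float], latest: float) -> float:
    """Return the z-score of `latest` relative to the person's own history."""
    if len(history) < 2:
        raise ValueError("need at least two baseline readings")
    spread = stdev(history)
    if spread == 0:
        raise ValueError("baseline has no variation; z-score is undefined")
    return (latest - mean(history)) / spread


def is_unusual(history: Sequence[float], latest: float, cutoff: float = 2.0) -> bool:
    """Flag readings at least `cutoff` standard deviations from the baseline."""
    return abs(deviation_from_baseline(history, latest)) >= cutoff


# Hypothetical hours of sleep over the previous five nights.
sleep_hours = [7.0, 7.5, 6.5, 7.0, 7.0]
print(is_unusual(sleep_hours, 5.0))  # a much shorter night than usual
print(is_unusual(sleep_hours, 7.2))  # within the usual range
```

Using a personal baseline instead of a population norm means the same reading can be unremarkable for one person and a meaningful change for another.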

How will Conversational AI transform mental health care?

Conversational AI increases access, enhances diagnostic precision, and supports long-term care. When responsibly deployed, it empowers clinicians and patients alike. John Snow Labs continues to lead in this domain by offering trusted, secure, and scientifically grounded AI infrastructure.

FAQs

What is the role of Natural Language Processing in mental health AI?

Natural Language Processing enables AI to understand clinical language, detect symptoms, and assess emotional states from unstructured text or speech.

Can AI chatbots replace therapists?

No, AI augments care but doesn’t replace human clinicians. It’s ideal for screening, support, and monitoring.

How is patient data protected?

John Snow Labs ensures HIPAA-compliant, encrypted systems with rigorous data governance.

Can Conversational AI detect suicide risk?

Yes. Through social media analysis, voice patterns, and NLP, AI can flag potential crisis cases for escalation. John Snow Labs offers a specialized Suicide Detection Social Media model that analyzes language cues from public posts to identify signs of suicidal ideation. This model is part of the broader Healthcare NLP suite and is supported by the MOSAIC-NLP project, a collaboration funded by the FDA Sentinel Innovation Center. In that study, NLP applied to over 17 million unstructured clinical notes doubled the detection rate of suicidality and self-harm events compared to structured data alone. These findings illustrate the value of NLP in surfacing mental health outcomes that would otherwise remain invisible in EHR systems, supporting public health monitoring, early warning, and timely clinical outreach.

Supplementary Q&A

How do Conversational AI tools adapt to different cultures or languages?

Advanced models support multilingual content, enabling culturally sensitive care. They can localize responses and detect linguistic nuances relevant to mental health.

What research supports AI in early mental health screening?

Studies in journals like JAMA Psychiatry and npj Digital Medicine have shown that AI can detect depression, PTSD, and anxiety from text, voice, and online behavior data.

What is the timeline for regulatory approval of AI in mental health?

The FDA has cleared some digital mental health tools. Full diagnostic approval depends on clinical trials, transparency, and long-term validation, and it typically takes 2 to 5 years.