For the past few years, the dominant assumption in healthcare AI has been one of universality: that a sufficiently powerful general-purpose language model, properly prompted, could handle clinical tasks well enough. The assumption was understandable. The largest general-purpose models are genuinely impressive, and the speed of their development has outpaced most predictions.

The assumption is now being tested against evidence, and the evidence is not favorable. In measurable, clinically consequential ways, general-purpose large language models consistently underperform domain-trained systems when applied to healthcare tasks. The gap is not marginal. It shows up in PHI detection accuracy, in physician preference evaluations, in cost per document processed, and increasingly, in the regulatory frameworks that are beginning to define what constitutes acceptable AI in medicine.

This article examines why generic AI is becoming structurally unsuited to the demands of regulated healthcare, what the performance data actually shows, and why domain-trained medical LLMs are positioned not just as a better choice, but as the only viable path for organizations that take compliance and clinical quality seriously.

The performance gap is larger than most organizations realize

The most direct way to understand the limitations of generic AI in healthcare is to look at what happens when it processes clinical data under controlled conditions.

A systematic assessment comparing de-identification performance across four systems (Healthcare NLP from John Snow Labs, Azure Health Data Services, AWS Comprehend Medical, and GPT-4o) evaluated each against 48 clinical documents annotated by medical experts. Healthcare NLP achieved a 96% F1-score for PHI detection. Azure Health Data Services reached 91%. AWS Comprehend Medical scored 83%. GPT-4o scored 79%.

That 17-point gap between the specialized system and GPT-4o is worth pausing on. In production, it means that for every thousand PHI entities in a document corpus, GPT-4o misses roughly 146 while Healthcare NLP misses approximately 9. In an organization processing hundreds of millions of patient notes, as Providence St. Joseph Health does, running 100,000 to 500,000 daily through their de-identification pipeline, the difference between 0.9% and 14.6% complete miss rates is not a rounding error. It is a compliance exposure of relevant scale.

The clinical performance gap extends beyond de-identification. The CLEVER study, published in JMIR AI in 2025, conducted a blind, randomized evaluation in which practicing physicians compared outputs from John Snow Labs’ Medical Model Small, an 8-billion-parameter domain-specific model, against GPT-4o across 500 novel clinical test cases spanning internal medicine, oncology, and neurology. Medical doctors preferred the domain-trained model over GPT-4o between 45% and 92% more often on dimensions of factuality, clinical relevance, and conciseness. On clinical summarization alone, physician preference for the domain-trained model was 47% versus 25% for GPT-4o, nearly double. These evaluations were conducted blind, with test cases created from scratch to prevent data contamination, and validated through intraclass correlation coefficient analysis for inter-annotator agreement.

The finding that carries the most strategic weight is this: the domain-trained model that outperformed GPT-4o has 8 billion parameters and can be deployed on-premise. The general-purpose model it outperformed is orders of magnitude larger and requires cloud infrastructure. Domain specificity, in other words, does more for clinical performance than raw model scale.

Why generic models fail structurally in clinical environments

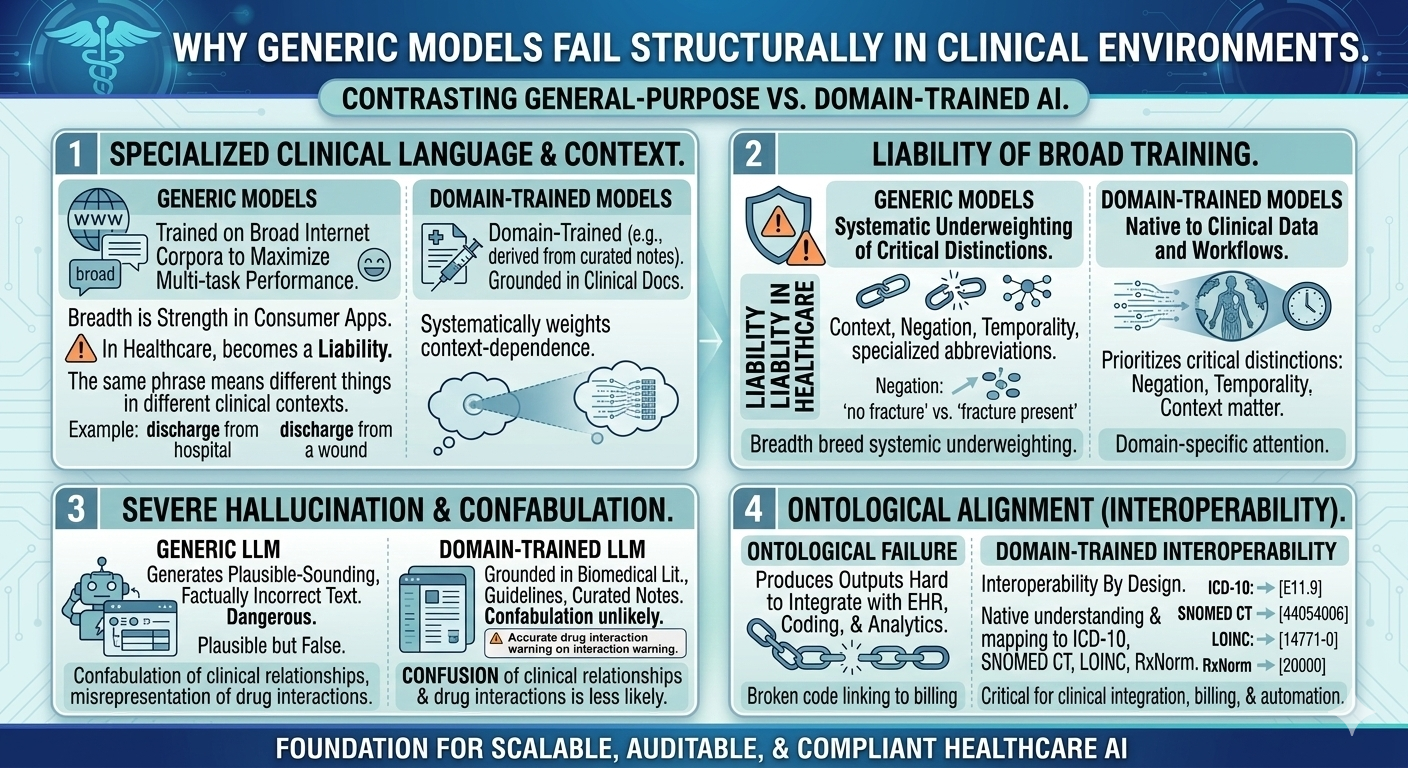

The performance gaps described above reflect structural differences between how general-purpose and domain-trained models are built, and what they are built for.

General-purpose LLMs are trained on broad internet-scale corpora to maximize performance across a wide range of tasks. That breadth is their strength in consumer and productivity applications. In healthcare, it becomes a liability. Clinical language is highly specialized: abbreviations carry precise meanings, negation and temporality matter enormously, and the same phrase can mean different things in different clinical contexts. A model that has processed vast amounts of general text but relatively limited clinical documentation will systematically underweight these distinctions.

Hallucination is a related and more serious problem. General-purpose models generate plausible-sounding text based on statistical patterns. In a clinical context, plausible-sounding text that is factually incorrect is not just unhelpful, it is potentially dangerous. A domain-trained model grounded in biomedical literature, clinical guidelines, and curated clinical notes is less likely to confabulate clinical relationships or misrepresent drug interactions because its training distribution more closely matches the domain it is operating in.

There is also the question of ontological alignment. Clinical workflows are organized around standard terminologies: ICD-10 for diagnoses, SNOMED CT for clinical concepts, LOINC for laboratory observations, RxNorm for medications. A model that does not natively understand and map to these terminologies produces outputs that are difficult to integrate with EHR systems, coding workflows, and downstream analytics. Domain-trained models embed this alignment by design.

The regulatory environment is tightening around exactly these gaps

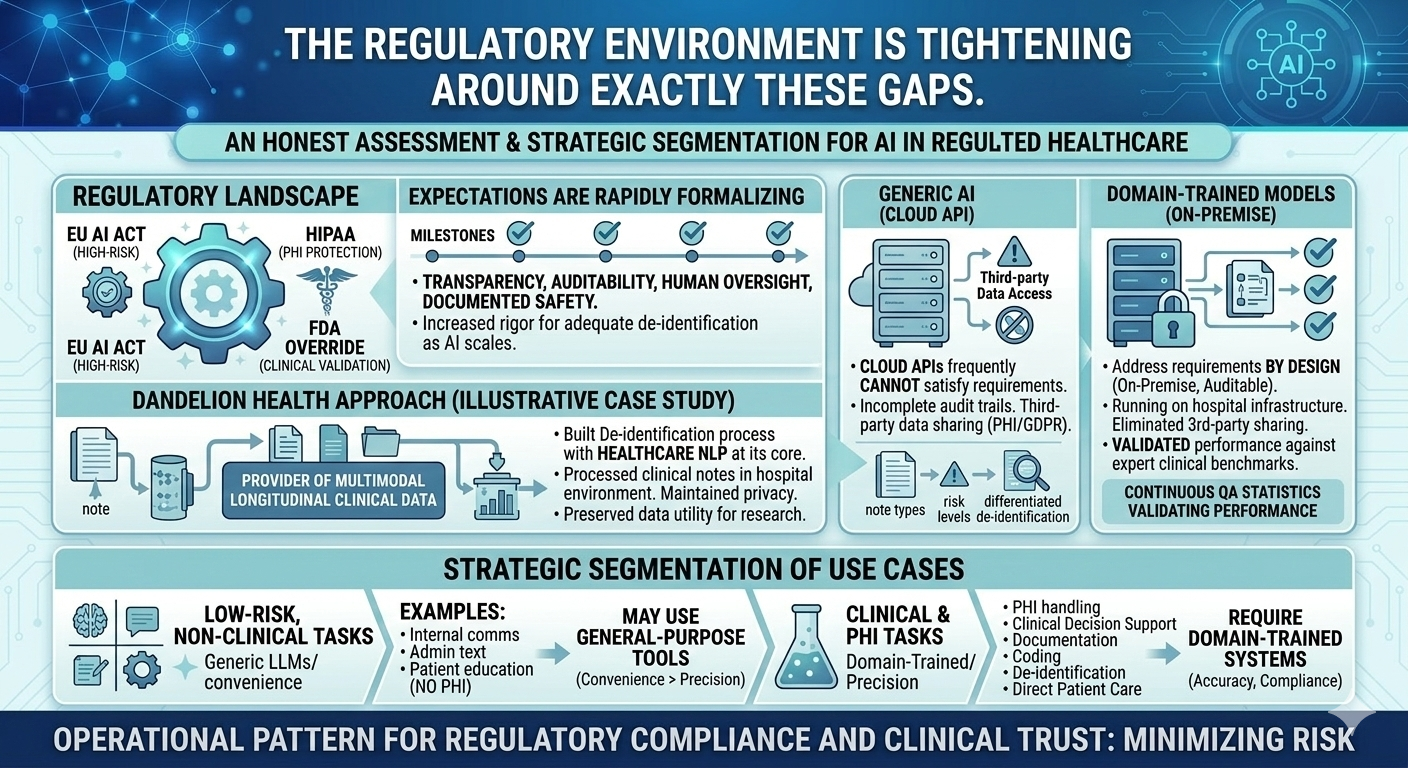

The performance and structural issues with generic AI in healthcare would be concerning enough in isolation. They become more urgent in the context of a regulatory environment that is rapidly formalizing its expectations for clinical AI.

The EU AI Act classifies medical-use AI under high-risk categories, with requirements for transparency, auditability, human oversight, and documented safety processes. HIPAA enforcement in the United States has long required demonstrable PHI protection, and the standards for what constitutes adequate de-identification are being applied with increasing rigor as AI processing of clinical data scales. FDA oversight of AI-enabled medical devices continues to evolve, with a growing emphasis on post-market surveillance, performance monitoring, and clinical validation.

These frameworks share a common logic: AI systems operating in high-stakes clinical environments must be auditable, must demonstrate their performance under representative conditions, and must provide mechanisms for human review and override. Generic LLMs, particularly those accessed via cloud APIs, frequently cannot satisfy these requirements. Audit trails are incomplete or inaccessible. Performance on clinical tasks has not been validated against expert annotation. Data processed through third-party APIs may not meet residency or handling requirements under HIPAA or GDPR.

Domain-trained models deployed within compliant infrastructure address these requirements by design rather than by retrofit. They can be run on-premise, eliminating third-party data sharing concerns. Their performance can be validated against clinical benchmarks. Their outputs can be logged, reviewed, and audited. These are not theoretical advantages, they are the practical prerequisites for deploying AI in regulated clinical environments at production scale.

What this means for health systems making AI investment decisions

The case for domain-trained medical LLMs is sometimes framed as a choice between accuracy and flexibility. That framing is misleading. The evidence shows that domain-trained models outperform general-purpose models on the clinical tasks that matter most, at lower cost per document, with better compliance characteristics. The trade-off is not accuracy versus flexibility, it is clinical-grade performance versus general-purpose convenience.

For health systems, the practical implication is a segmentation of use cases. Low-risk, non-clinical tasks (internal communications, general administrative text, patient education materials that do not involve PHI) may reasonably continue to use general-purpose tools. For any task that involves PHI, clinical decision support, documentation, coding, de-identification, or direct patient care, the performance and compliance requirements point clearly toward domain-trained systems.

Dandelion Health’s approach illustrates the practical architecture that responsible clinical AI requires. As a provider of multimodal longitudinal clinical data for healthcare innovators, Dandelion built its de-identification process with Healthcare NLP at its core processing clinical notes in hospital environments, maintaining patient privacy, and preserving the data utility needed for high-fidelity research. Their framework breaks down different note types by risk level, adapts the de-identification process accordingly, and uses QA statistics to validate performance continuously. That kind of differentiated, evidence-based approach to clinical AI infrastructure is what the current regulatory environment rewards and what generic AI tools are not architected to support.

Conclusion: the compliance argument and the performance argument point the same direction

The shift away from generic AI in healthcare is sometimes described as regulatory pressure forcing organizations to adopt less capable tools. The evidence does not support that description. Domain-trained medical LLMs are more accurate on PHI detection, preferred by physicians in blind clinical evaluations, cheaper per document at production scale, and more compatible with the audit and governance requirements that regulated environments demand.

The organizations that recognized this earliest have already built the infrastructure to act on it. Providence St. Joseph Health de-identified 700 million patient notes with a validated PHI leak rate <1%. Intermountain Health processes hundreds of millions of clinical documents with a 70% reduction in document review time. These outcomes are not the result of using the most powerful general-purpose model available. They are the result of using the right model, built for the right domain, deployed on the right infrastructure.

For health systems still evaluating their approach to clinical AI, the strategic question is not whether to move toward domain-trained models. The performance data, cost data, and regulatory trajectory all point the same direction. The question is how quickly to build the infrastructure that makes that transition durable: validated de-identification pipelines, structured data assets, compliant deployment environments, and human-in-the-loop governance frameworks. The organizations that invest in that foundation now will find it significantly easier to adopt whatever capabilities emerge next.

Frequently asked questions

How much more accurate is domain-trained Healthcare NLP compared to generalist LLMs for de-identification?

A systematic assessment on 48 medical expert-annotated clinical documents found Healthcare NLP achieving a 96% F1-score for PHI detection, compared to 79% for GPT-4o a 17-point gap. In terms of complete misses, Healthcare NLP missed 0.9% of PHI entities versus 14.6% for GPT-4o. At production scale, this difference translates to a materially different compliance risk profile. Full benchmark details are available at arxiv.org/html/2503.20794v1.

Do physicians actually prefer domain-trained medical LLMs over general-purpose models?

Yes, and the preference is substantial. The CLEVER study, a blind, randomized evaluation by practicing physicians across 500 novel clinical test cases, found that medical doctors preferred John Snow Labs’ Medical Model Small over GPT-4o between 45% and 92% more often on factuality, clinical relevance, and conciseness. For clinical summarization specifically, physician preference was 47% for the domain-trained model versus 25% for GPT-4o. The study is published in JMIR AI 2025 (doi.org/10.2196/72153).

Can a smaller domain-trained model really outperform a much larger general-purpose LLM?

The CLEVER study demonstrates exactly this. John Snow Labs’ Medical Model Small, with 8 billion parameters, outperformed GPT-4o, a substantially larger general-purpose model across clinical summarization, information extraction, and biomedical research QA in physician evaluations. The study concluded that domain-specific training enables smaller models to outperform much larger general-purpose systems on precision-critical tasks. The additional advantage is that an 8-billion-parameter model can be deployed on-premise, which matters significantly for PHI handling and data residency compliance.

What does regulatory compliance actually require from clinical AI systems?

Regulatory frameworks including the EU AI Act, HIPAA, and emerging FDA guidance on AI-enabled medical devices share several common requirements: demonstrable PHI protection, audit trails for data processing and model outputs, human oversight mechanisms, validated performance against clinical benchmarks, and documented risk assessment. Generic LLMs accessed via cloud APIs frequently cannot satisfy these requirements because performance on clinical tasks has not been independently validated, audit trails are incomplete, and data processed through third-party infrastructure may not meet data auditability and residency standards. Domain-trained models deployed on-premise within compliant infrastructure are architecturally better suited to meeting these requirements.

What should health systems do first when transitioning from generic to domain-trained AI?

The most effective starting point is a use-case audit: categorize current and planned AI applications by clinical risk level, PHI involvement, and regulatory exposure. High-risk applications as de-identification, clinical decision support, documentation, or medical coding should be prioritized for migration to domain-trained, compliance-ready systems. Organizations can explore John Snow Labs’ Healthcare NLP and Medical LLM capabilities at johnsnowlabs.com/healthcare-nlp/ and johnsnowlabs.com/healthcare-llm/, and review verified customer implementations at johnsnowlabs.com/customers/.