Institution-scale medical reasoning: what the field is actually learning

There is a version of the institution-scale clinical reasoning story that is easy to tell: AI systems that continuously reason over all of a hospital’s clinical data, surfacing risks in real time, detecting care gaps automatically, and supporting every clinical decision with longitudinal context.

That version is compelling, and it is not entirely wrong. The infrastructure to build toward it exists, and some components of it are already deployed in production at leading health systems. But there is a more useful version of the story: one that acknowledges where the technology is genuinely mature, where it is still developing, and what closing the gap between demonstration and production actually requires.

That version is less tidy. It is also more honest, and it gives health system leaders actionable guidance for real investment decisions rather than hype.

This article attempts to tell that version. It examines what real-time institution-scale reasoning requires, what the field has learned from early implementations, where the genuine technical and organizational challenges lie, and what distinguishes the health systems making durable progress from those that have announced ambitious AI strategies without building the foundations those strategies require.

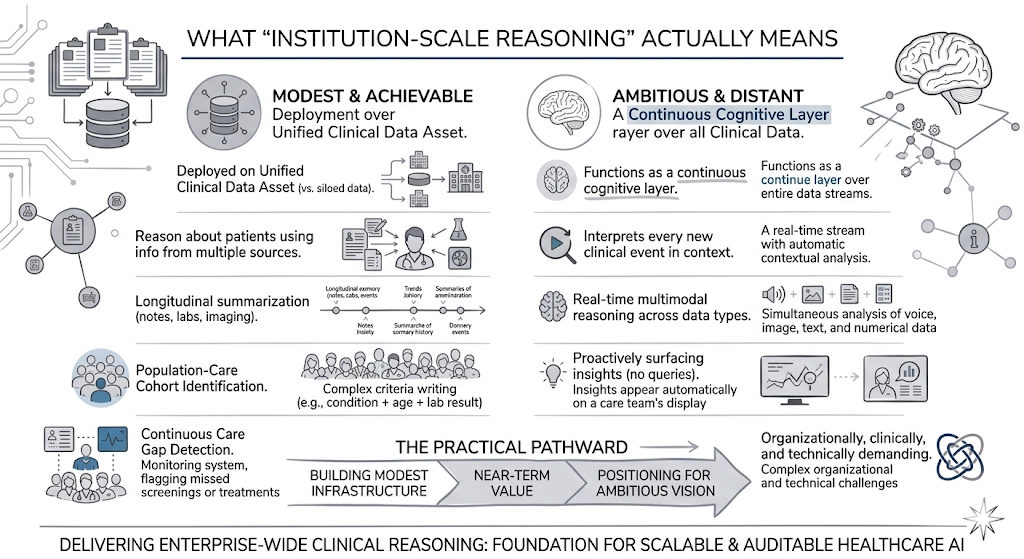

What institution-scale reasoning actually means when you unpack it

The phrase “institution-scale reasoning” carries a lot of weight. It is worth being precise about what it does and does not mean, because the ambiguity in the term is responsible for a significant amount of misaligned expectation.

At its most modest and most achievable, institution-scale reasoning means deploying AI that operates on a unified clinical data asset, rather than on siloed project-specific data sets, and can therefore reason about patients using information from multiple sources and time points simultaneously. In practice, that looks like:

A summarization model that can draw on notes, labs, and imaging findings to produce a longitudinal summary rather than a single-document summary.

A cohort identification tool that can find patients matching complex multi-variable criteria across an entire population rather than within a single department’s records.

A care gap detection system that monitors the full patient population continuously rather than running periodic batch queries against structured data fields only.
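As a rough illustration of the second and third examples, the sketch below finds patients who match a diagnosis drawn from one source and have a missing or stale lab result drawn from another. Everything here is an assumption made for illustration: the `PatientRecord` shape, the `find_hba1c_gaps` name, the 180-day window, and the sample data are hypothetical and do not describe any particular health system's schema or care protocol.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass
class PatientRecord:
    """Hypothetical unified record combining note-derived and structured data."""
    patient_id: str
    diagnoses: set = field(default_factory=set)  # codes extracted from clinical notes
    last_hba1c_date: Optional[date] = None       # most recent HbA1c from structured labs


def find_hba1c_gaps(records, today, max_age_days=180):
    """Return IDs of patients with a type 2 diabetes code (ICD-10-CM E11.9)
    whose most recent HbA1c is missing or older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        r.patient_id
        for r in records
        if "E11.9" in r.diagnoses
        and (r.last_hba1c_date is None or r.last_hba1c_date < cutoff)
    ]


# Hypothetical sample population.
records = [
    PatientRecord("p1", {"E11.9"}, date(2024, 5, 1)),   # recent lab: no gap
    PatientRecord("p2", {"E11.9"}, date(2023, 6, 1)),   # stale lab: gap
    PatientRecord("p3", {"E11.9", "I10"}, None),        # no lab on file: gap
    PatientRecord("p4", {"I10"}, None),                 # not in the cohort
]

print(find_hba1c_gaps(records, today=date(2024, 6, 30)))  # ['p2', 'p3']
```

The point of the sketch is the data dependency, not the filter itself: the query only works if note-derived diagnoses and structured lab dates already live in the same governed record.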

At its most ambitious and most distant, institution-scale reasoning means AI that functions as a continuous cognitive layer over all clinical data, interpreting every new clinical event in the context of a patient’s complete history, reasoning in real time across multimodal data, and proactively surfacing insights to care teams without requiring explicit queries.

This version is conceivable, but it is organizationally, clinically, and technically demanding in ways that are easy to underestimate from a distance.

Rather than trying to decide which ultimate vision to pursue, most health systems should ask a narrower question: which components of the modest version should be built now, as genuine infrastructure that delivers near-term value and positions the organization to build toward the more ambitious version as both the technology and the organization mature?

The answer to that question requires an honest assessment of where the field currently stands.

Where the technology is genuinely mature

Several components of the institution-scale reasoning stack have been validated at production scale and are no longer experimental.

De-identification at enterprise volume is one of the clearest examples.

A systematic assessment comparing de-identification performance found Healthcare NLP achieving a 96% F1-score for PHI detection, compared to 91% for Azure Health Data Services, 83% for AWS Comprehend Medical, and 79% for GPT-4o.

Providence St. Joseph Health’s deployment has validated that level of performance at production scale: 2 billion patient notes de-identified with zero re-identifications, a 0.81% PHI leak rate validated across 700 million patient notes in production, and 99%+ accuracy in PHI detection.

With regulatory-grade clinical AI, de-identification at enterprise volume is, for practical purposes, a solved engineering problem.
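For readers less familiar with the metric used in the benchmark above, F1 is the harmonic mean of precision and recall. The sketch below shows only the arithmetic. The counts are hypothetical, chosen to make the calculation easy to follow, and are not taken from the cited assessment; the function name `f1_score` is likewise illustrative.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Compute F1 as the harmonic mean of precision and recall.

    Returns 0.0 when there are no true positives, which also avoids
    dividing by zero.
    """
    if true_positives == 0:
        return 0.0
    precision = true_positives / (true_positives + false_positives)
    recall = true_positives / (true_positives + false_negatives)
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 960 PHI spans found correctly, 40 spurious, 40 missed.
print(f"{f1_score(960, 40, 40):.2f}")  # 0.96
```

Because F1 balances both error types, a high score on PHI detection requires that the system rarely misses identifiers and rarely masks text that is not PHI.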

Clinical entity extraction and normalization at scale is similarly mature.

Extracting diagnoses, medications, procedures, lab findings, and their relational and temporal context from unstructured clinical text, and mapping them to standard ontologies like ICD-10, SNOMED CT, LOINC, and RxNorm, is a capability that has been deployed at production scale across diverse document types.

MiBA’s NLP pipeline extracted 113.6 million entities and 29.2 million relationships from 1.4 million physician notes and one million PDF reports and scans, achieving a combined entity extraction F1 score of 93% and a relationship extraction F1 score of 88%.

Domain-trained medical LLMs for text summarization and information extraction are also in the mature category.

In the CLEVER study’s blind evaluation by practicing physicians across 500 novel clinical test cases, John Snow Labs’ Medical LLM Small, an 8-billion-parameter domain-trained model, was preferred over GPT-4o 88% more often on factuality and was also preferred on other clinical relevance metrics.

Intermountain Health’s production deployment demonstrates the practical value: medical text summarization reduced document review time from ten minutes to three minutes, a 70% reduction.

The model quality required for reliable summarization and extraction exists and is deployed.

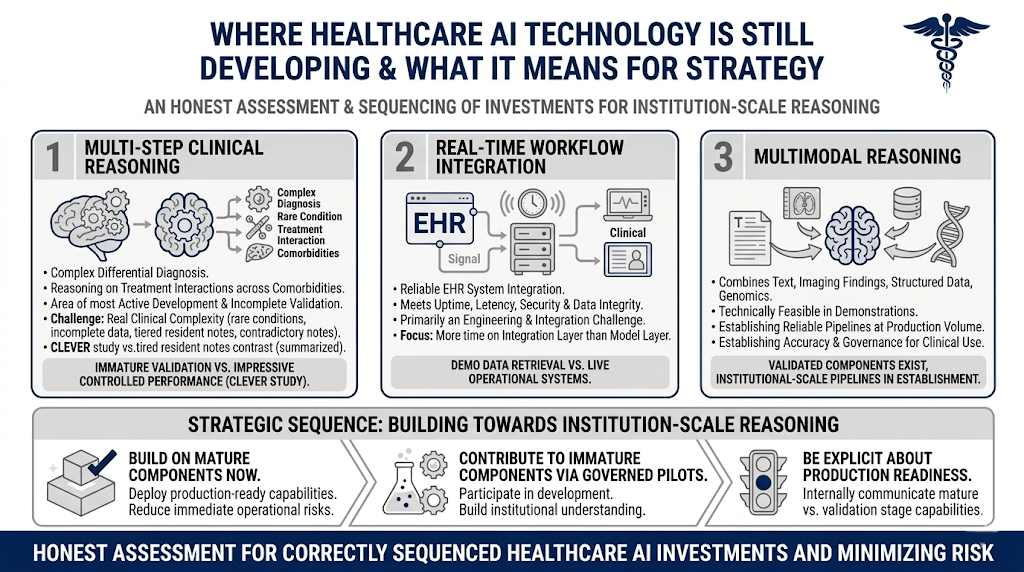

Where the technology is still developing and what that means for strategy

An honest assessment of the field requires equal clarity about where the technology is not yet mature, because misplaced confidence in immature capabilities is where ambitious AI strategies generate expensive failures.

Multi-step clinical reasoning, the kind required to derive a differential diagnosis from mixed data or to reason about treatment interactions across comorbidities in a complex patient, is the area of most active development and least complete validation.

Medical LLMs have demonstrated impressive performance on structured clinical reasoning tasks in controlled evaluations.

What has not been established at production scale is whether that reasoning quality holds across the distribution of real clinical complexity that an institution-scale deployment would encounter: rare conditions, atypical presentations, incomplete data, contradictory clinical notes, and the full range of documentation quality that exists across departments and clinicians. Production reasoning operates on clinical notes written at 2 a.m. by a tired resident, not on the curated cases of a controlled evaluation.

Real-time integration with live clinical workflows is a second area where the gap between demonstration and production is significant.

Retrieving data from an EHR for a demonstration is a different problem from integrating reliably with the operational EHR system in a way that meets the uptime, latency, security, and data integrity requirements of a production clinical environment.

This is primarily an engineering and integration challenge rather than a model capability challenge, but it is not trivial. Health systems that have successfully deployed AI tools within clinical workflows have typically spent substantially more time on the integration layer than on the model layer.

Multimodal reasoning, which combines text, imaging findings, structured data, and genomics in a unified reasoning process, is technically feasible in demonstrated systems but has not been validated at institutional scale in ways that would support broad clinical deployment. The components exist, but the pipelines that reliably combine them at production volume, with the accuracy and governance characteristics required for clinical use, are still being established.

Rather than avoiding institution-scale reasoning, organizations should sequence investments correctly. This means building on the mature components now, contributing to the development of immature ones through governed pilot deployments, and remaining explicit internally about what is production-ready.

What early implementations are actually learning

The organizations that have moved furthest toward institution-scale reasoning have generated a body of practical knowledge that is more valuable than any theoretical framework.

Several patterns emerge consistently.

The data foundation is always the bottleneck. Every organization that has attempted to build institution-scale AI has found that data quality, consistency, and governance limit progress long before model capability does.

Ohio State University’s research data infrastructure for over 200 million clinical notes required establishing de-identification with full auditability, information extraction workflows, and human-in-the-loop validation before any reasoning capability could be deployed reliably on top of it.

This pattern repeats across implementations.

Governance cannot be added after the fact. Organizations that build AI systems first and governance frameworks second consistently encounter the same problem: the audit trails, access controls, and validation documentation required for compliance review do not exist because they were not designed in. Reconstructing them retroactively is expensive and sometimes impossible.

The organizations that have deployed institution-scale AI in regulated environments have uniformly established governance frameworks as prerequisites for first deployment, not as follow-on work.
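One way to picture an audit trail that is "designed in" is a wrapper that records who ran which processing step, when, and on what input, at the moment the step runs. The sketch below is a minimal illustration under stated assumptions: `audited`, `AUDIT_LOG`, and `toy_transform` are hypothetical names, the in-memory list stands in for whatever durable, access-controlled store a real deployment would use, and the log keeps content hashes rather than raw text so that the trail itself holds no clinical content.

```python
import functools
import hashlib
from datetime import datetime, timezone

AUDIT_LOG = []  # in-memory stand-in for a durable, access-controlled audit store


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audited(step_name: str):
    """Decorator that appends an audit entry every time the wrapped step runs."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(text: str, *, user: str) -> str:
            result = func(text)
            AUDIT_LOG.append({
                "step": step_name,
                "user": user,
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "input_sha256": _sha256(text),    # hash only, never the raw note
                "output_sha256": _sha256(result),
            })
            return result
        return wrapper
    return decorator


@audited("uppercase_note")
def toy_transform(text: str) -> str:
    """Placeholder processing step; a real step would be de-identification, extraction, etc."""
    return text.upper()


toy_transform("example note", user="analyst_01")
print(AUDIT_LOG[-1]["step"], AUDIT_LOG[-1]["user"])
```

Because the entry is written as a side effect of running the step, there is no separate logging task to forget, which is the practical difference between governance as a prerequisite and governance as follow-on work.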

Clinical adoption requires workflow integration, not workflow addition. AI outputs that require clinicians to move to a separate system, run a separate query, or interpret a separate report generate adoption resistance regardless of their accuracy.

The implementations that have achieved genuine clinical adoption, rather than technical deployment with low utilization, have integrated AI outputs into the systems and workflows clinicians already use, at the moments when those outputs are relevant.

This is harder to build than the model itself and is frequently underestimated in project scoping.

Human oversight scales better than anticipated when designed correctly. The concern that human-in-the-loop review would become unmanageable at institutional scale has generally not materialized in implementations that designed the oversight layer thoughtfully.

When AI outputs are routed to human reviewers only for edge cases and uncertain outputs, rather than requiring review of every output, and when the review interface is designed for efficiency, review workload grows far more slowly than institutional volume.

Ohio State’s pipeline for 200 million notes uses exactly this design: automated processing with structured human validation for the cases that require it, not for every document.
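The routing idea described above can be sketched as a simple confidence threshold. This is an illustration only, not a description of Ohio State's implementation: the `ModelOutput` shape, the `route_for_review` name, and the 0.90 threshold are all assumptions, and a real system would calibrate the threshold against validated error rates.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    """Hypothetical model result carrying a model-reported confidence in [0, 1]."""
    document_id: str
    label: str
    confidence: float


def route_for_review(outputs, review_threshold=0.90):
    """Split outputs into auto-accepted results and a human review queue.

    Outputs below the threshold go to reviewers; everything else is accepted
    automatically.
    """
    auto_accepted, needs_review = [], []
    for out in outputs:
        if out.confidence < review_threshold:
            needs_review.append(out)
        else:
            auto_accepted.append(out)
    return auto_accepted, needs_review


# Hypothetical batch of three outputs.
batch = [
    ModelOutput("doc-1", "PHI_REMOVED", 0.98),
    ModelOutput("doc-2", "PHI_REMOVED", 0.62),
    ModelOutput("doc-3", "PHI_REMOVED", 0.90),
]
accepted, queue = route_for_review(batch)
print([o.document_id for o in accepted])  # ['doc-1', 'doc-3']
print([o.document_id for o in queue])     # ['doc-2']
```

With this design, reviewer hours track the size of the uncertain fraction rather than total document volume.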

The honest case for investing now without overpromising

The honest case for investing in institution-scale reasoning infrastructure now rests on the components being built today, which function as genuine infrastructure that will support an expanding range of capabilities as both the technology and the organization mature.

A production-grade de-identification pipeline built now will support all AI use cases that require access to de-identified clinical data, extending far beyond the initial projects.

A regulatory-grade clinical NLP infrastructure that extracts and normalizes entities across document types provides the foundation on which new reasoning capabilities are built.

A governance framework established for the first production deployment will apply to every subsequent one, reducing the cost and complexity of each new capability deployed.

The organizations that will be positioned to deploy mature multimodal reasoning, real-time deterioration detection, and institution-wide care gap closure when those capabilities are fully validated are the ones that are building the data foundations and governance infrastructure now.

The ones that wait until the full vision is achievable will find that the foundation work takes two to three years regardless of when they start, and that starting later means being further behind at every point in the maturation curve.

The real case for urgency is the substantial time and infrastructure investment required before an organization can use these systems once they are fully ready. That foundation has to be built incrementally, starting now; it cannot be caught up all at once.

Intellectual honesty as a competitive advantage

The health systems making the most durable progress toward institution-scale clinical reasoning share a characteristic that is easy to overlook: they are honest, internally and externally, about what is working, what is not, and what they do not yet know.

That honesty drives better investment decisions, more realistic timelines, and more durable clinical adoption than the alternative, which is pursuing an ambitious vision with insufficient acknowledgment of the gaps between demonstration and production.

The field is genuinely advancing. De-identification at production scale is solved. Clinical entity extraction at institutional volume is established. Domain-trained medical LLMs outperform general-purpose models on the tasks that matter most for clinical use.

The data foundations required for real-time reasoning are being built at leading health systems and the lessons from those builds are accumulating.

What the field is learning, in aggregate, is that institution-scale reasoning requires addressing infrastructure, governance, and integration problems. Investments in these areas compound when approached systematically but degrade when handled piecemeal.

The organizations that understand that distinction, and invest accordingly, build a durable competitive advantage.

Frequently asked questions

Which components of institution-scale clinical reasoning are production-ready today?

Three components have been validated at production scale and are no longer experimental.

De-identification: Healthcare NLP achieves a 96% F1-score for PHI detection in systematic benchmarks. Providence St. Joseph Health has deployed this technology to de-identify 2 billion patient notes with zero re-identifications, and has validated a 0.81% PHI leak rate across 700 million patient notes in production.

Clinical entity extraction: MiBA’s pipeline achieved a 93% combined F1 for entity extraction and 88% for relationship extraction across 1.4 million physician notes and one million PDF reports and scans.

Medical LLM summarization and extraction: In blind evaluations, physicians preferred John Snow Labs’ Medical LLM Small over GPT-4o 88% more often on factuality. Intermountain Health achieved a 70% reduction in document review time in its production deployment.

Where are the genuine gaps in current institution-scale AI capabilities?

Three areas have significant gaps between demonstration and production-validated capability.

1) Multi-step clinical reasoning across complex patient presentations has shown strong performance in structured evaluations but has not been validated across the full distribution of real clinical complexity at institutional scale.

2) Real-time integration with operational EHR systems, as distinct from data retrieval for demonstrations, is primarily an engineering challenge that most implementations find more difficult and time-consuming than anticipated.

3) Multimodal reasoning that reliably combines text, imaging, structured data, and genomics at production volume with clinical-grade accuracy is technically demonstrated but not yet broadly deployed.

Organizations should be explicit internally about which capabilities fall into which category.

Why do organizations that start with governance as a prerequisite fare better than those that retrofit it?

Governance retrofitted onto running systems is expensive and sometimes impossible to complete fully. Audit trails that were not designed in cannot be reconstructed retroactively. Access controls applied after data has been processed do not retroactively protect data that was processed without them. Validation documentation created after a model has been running in production is less credible than documentation created before deployment.

The organizations that have successfully deployed institution-scale AI in regulated environments have uniformly found that the cost of establishing governance as a first-deployment prerequisite is lower than the cost of addressing governance gaps identified during compliance review of a running system.

How should health systems sequence investments in clinical AI infrastructure?

The most effective sequence starts with the mature components that deliver near-term value and form the foundation for future capabilities.

A production-grade de-identification pipeline is typically the first investment because it is the prerequisite for any AI use of clinical text, its performance is well-validated, and it delivers immediate value for secondary data use, research, and registry building.

Clinical entity extraction and normalization infrastructure is the second layer, because it transforms de-identified text into structured, queryable data that supports cohort identification, quality measurement, and downstream reasoning.

Domain-trained LLMs for summarization and information extraction come third, building on the structured data layer the first two components create.

Each layer compounds the value of the previous ones and reduces the marginal cost of adding new use cases.
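The layering described above can be sketched as a pipeline of interchangeable stages, each of which consumes the output of the one before it. The stage bodies below are deliberately trivial placeholders and every name is hypothetical: the regex masks only one ID-like pattern, the extraction is a two-word dictionary lookup, and the summary is a formatted string. None of this represents a production de-identification, NLP, or LLM component; the sketch shows only the shape of a modular sequence.

```python
import re
from typing import Callable, Dict, List

Stage = Callable[[Dict], Dict]


def deidentify(doc: Dict) -> Dict:
    """Layer 1 (toy): mask strings shaped like NNN-NN-NNNN. Real PHI detection is far broader."""
    masked = re.sub(r"\b\d{3}-\d{2}-\d{4}\b", "[ID]", doc["text"])
    return {**doc, "text": masked}


def extract_entities(doc: Dict) -> Dict:
    """Layer 2 (toy): dictionary lookup standing in for clinical entity extraction."""
    vocabulary = {"metformin": "medication", "hypertension": "diagnosis"}
    lowered = doc["text"].lower()
    found = [{"text": term, "type": kind} for term, kind in vocabulary.items() if term in lowered]
    return {**doc, "entities": found}


def summarize(doc: Dict) -> Dict:
    """Layer 3 (toy): builds on the structured entities produced by layer 2."""
    terms = ", ".join(e["text"] for e in doc["entities"]) or "none"
    return {**doc, "summary": f"Entities found: {terms}"}


def run_pipeline(doc: Dict, stages: List[Stage]) -> Dict:
    """Apply each stage in order; new stages can be appended without rebuilding earlier ones."""
    for stage in stages:
        doc = stage(doc)
    return doc


PIPELINE: List[Stage] = [deidentify, extract_entities, summarize]

result = run_pipeline({"text": "ID 123-45-6789. Hypertension; on metformin."}, PIPELINE)
print(result["text"])
print(result["summary"])
```

Because each stage takes and returns the same document shape, a new use case adds a stage or reuses existing ones rather than rebuilding the chain, which is where the falling marginal cost comes from.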

What is the most common reason institution-scale AI initiatives stall after promising pilots?

The most consistent pattern is that pilots are built on project-specific data infrastructure that was not designed for reuse.

When the pilot succeeds and the organization tries to expand it, the data access, pipeline configuration, and validation work must be repeated for each new use case because nothing was built to be shared.

Organizations that escape this pattern build shared infrastructure from the first deployment: a governed data asset that multiple use cases can access, a modular pipeline architecture that can be extended without being rebuilt, and a governance framework that applies consistently across deployments.

The difference in cost and speed between the tenth use case deployed on shared infrastructure and the tenth use case deployed on project-specific infrastructure is substantial.