Annotation Lab is now the NLP Lab – the Free No-Code AI by John Snow Labs

In the classical annotation workflow, an annotator working on a task must perform a series of actions to create a new annotation and submit it as ground truth:

- Create the completion

- Save the completion

- Submit the completion

- Confirm submission

- Load next task

This process suits more complex workflows and larger tasks. For simpler projects involving smaller tasks (e.g. one sentence or one tweet), the steps above can be reduced to speed up the annotation process.

Simplified workflows

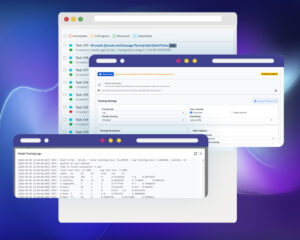

Since release 2.8.0, Annotation Lab offers support for simplified workflows. Annotators can now submit a completion with just one click.

- The project owner/manager can activate this option from the Settings of the Setup page (Project Configuration). Once it is enabled, annotators see the submit button on the labeling page.

- A second option is available to the project owner/manager on the same Project Configuration screen: “Serve next task after completion submission”. Once it is enabled, annotators see the next task on the labeling page right after submitting the completion for the current task.

Accept Predictions in one click

When predictions are available for a task, annotators are offered the option to accept them with just one click and move automatically to the next task. Clicking Accept Prediction creates a new completion based on the prediction and submits it as ground truth. The next task in line (assigned to the current annotator/reviewer and with Incomplete or In progress status) is then served automatically.

If the prediction is not correct, annotators still have the option to clone it and manually correct the resulting completion before submitting it.
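The accept-prediction flow described above can be illustrated with a small in-memory model. This is only a sketch under stated assumptions, not Annotation Lab's actual implementation or API: the `Task` and `Completion` classes, the `accept_prediction` and `next_task` functions, and the `"Submitted"` status name are all hypothetical and exist purely for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Completion:
    author: str
    result: list
    submitted: bool = False  # True once the completion counts as ground truth


@dataclass
class Task:
    task_id: int
    assignee: str
    # "Incomplete" and "In progress" come from the text; "Submitted" is an assumed name.
    status: str = "Incomplete"
    prediction: Optional[list] = None
    completions: List[Completion] = field(default_factory=list)


def next_task(tasks: List[Task], user: str) -> Optional[Task]:
    """Return the first open task assigned to `user`, or None if there is none."""
    for task in tasks:
        if task.assignee == user and task.status in ("Incomplete", "In progress"):
            return task
    return None


def accept_prediction(tasks: List[Task], task: Task, user: str) -> Optional[Task]:
    """Turn the prediction into a submitted completion, then serve the next task."""
    if task.prediction is None:
        raise ValueError("task has no prediction to accept")
    # Copy the prediction so later edits to the completion leave the prediction intact.
    completion = Completion(author=user, result=list(task.prediction), submitted=True)
    task.completions.append(completion)
    task.status = "Submitted"
    return next_task(tasks, user)


# Usage: alice accepts the prediction on task 1 and is served task 3.
tasks = [
    Task(1, "alice", prediction=[{"label": "PER", "text": "Ada"}]),
    Task(2, "bob"),
    Task(3, "alice", status="In progress"),
]
served = accept_prediction(tasks, tasks[0], "alice")
print(served.task_id)  # 3
```

Task 2 is skipped because it belongs to another user, which mirrors the rule that only tasks assigned to the current annotator/reviewer are served.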

Minor update on Shortcuts:

- Annotators can save or update a completion using CTRL+Enter

- Annotators can submit a completion using ALT+Enter

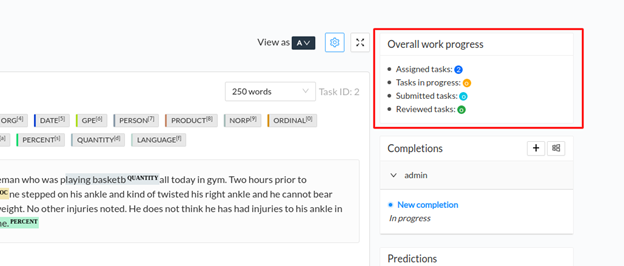

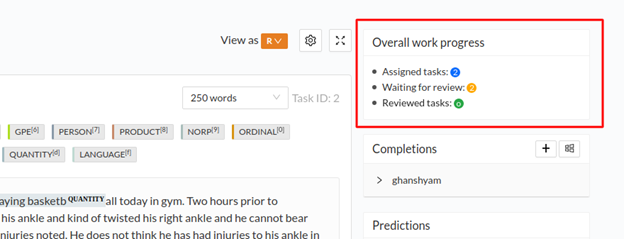

Overall work progress:

Annotators and reviewers can see their overall work progress from within the labeling page. The status is calculated relative to the work assigned to them.

- For Annotator View:

- For Reviewer View: